Evadir obstáculos con reinforcement learning para robots móviles

Este ejemplo utiliza reinforcement learning basado en el gradiente de políticas determinísticas profundo (DDPG) para desarrollar una estrategia que permita a un robot móvil evadir obstáculos. Para ver un breve resumen del algoritmo DDPG, consulte Deep Deterministic Policy Gradient (DDPG) Agent (Reinforcement Learning Toolbox).

Este escenario de ejemplo entrena a un robot móvil para evadir obstáculos dadas las lecturas del sensor de distancia que detectan obstáculos en el mapa. El objetivo del algoritmo de reinforcement learning es aprender qué controles (velocidad lineal y angular) debe utilizar el robot para evadir colisiones contra obstáculos. Este ejemplo utiliza un mapa de ocupación de un entorno conocido para generar lecturas del sensor de distancia, detectar obstáculos y comprobar las colisiones que pueda sufrir el robot. Las lecturas del sensor de distancia son las observaciones para el agente DDPG y los controles de velocidad lineal y angular son la acción.

Cargar mapa

Cargue una matriz del mapa, simpleMap, que representa el entorno para el robot.

load exampleMaps simpleMap load exampleHelperOfficeAreaMap office_area_map mapMatrix = simpleMap; mapScale = 1;

Parámetros del sensor de distancia

Luego, configure un objeto rangeSensor que simula un sensor de distancia con ruido. El agente considera las lecturas del sensor de distancia como observaciones. Defina las posiciones angulares de las lecturas de distancia, la distancia máxima y los parámetros de ruido.

scanAngles = -3*pi/8:pi/8:3*pi/8; maxRange = 12; lidarNoiseVariance = 0.1^2; lidarNoiseSeeds = randi(intmax,size(scanAngles));

Parámetros del robot

La acción del agente es un vector bidimensional donde y son las velocidades lineal y angular del robot. El agente DDPG utiliza entradas normalizadas para las velocidades angular y lineal, lo que significa que las acciones del agente son un escalar entre -1 y 1, que se multiplica por los parámetros maxLinSpeed y maxAngSpeed para obtener el control real. Especifique esta velocidad lineal y angular máxima.

Especifique también la posición inicial del robot como [x y theta].

% Max speed parameters maxLinSpeed = 0.3; maxAngSpeed = 0.3; % Initial pose of the robot initX = 17; initY = 15; initTheta = pi/2;

Mostrar el mapa y las posiciones del robot

Para visualizar las acciones del robot, cree una figura. Empiece mostrando el mapa de ocupación y represente la posición inicial del robot.

fig = figure("Name","simpleMap"); set(fig, "Visible","on"); ax = axes(fig); show(binaryOccupancyMap(mapMatrix),"Parent",ax); hold on plotTransforms([initX,initY,0],eul2quat([initTheta,0,0]),"MeshFilePath","groundvehicle.stl","View","2D"); light; hold off

![Figure simpleMap contains an axes object. The axes object with title Binary Occupancy Grid, xlabel X [meters], ylabel Y [meters] contains 5 objects of type patch, line, image.](avoidobstaclesusingreinforcementlearningformobilerobotsexample_01_es.png)

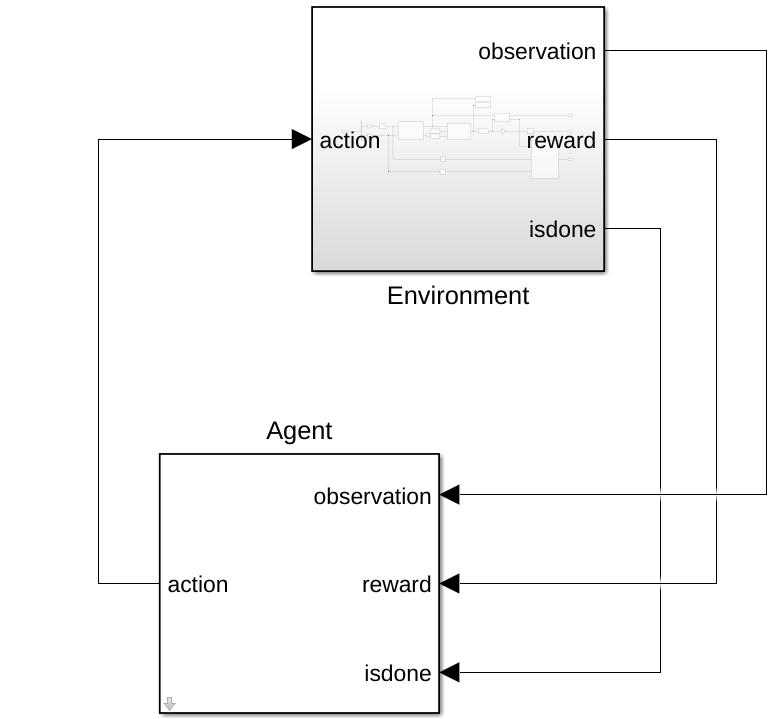

Interfaz del entorno

Cree un modelo de entorno que realice la acción y proporcione las señales de observación y recompensa. Especifique el nombre del modelo de ejemplo proporcionado, exampleHelperAvoidObstaclesMobileRobot, los parámetros de tiempo de simulación y el nombre del bloque del agente.

mdl = "exampleHelperAvoidObstaclesMobileRobot"; Tfinal = 100; sampleTime = 0.1; agentBlk = mdl + "/Agent";

Abra el modelo.

open_system(mdl)

El modelo contiene los bloques Environment y Agent. El bloque Agent aún no está definido.

Dentro del bloque Environment Subsystem, debería ver un modelo para simular el robot y los datos del sensor. El subsistema capta la acción, genera la señal de observación basada en las lecturas del sensor de distancia y calcula la recompensa en función de la distancia a los obstáculos y el esfuerzo de los comandos de acción.

open_system(mdl + "/Environment")Defina los parámetros de observación, obsInfo, utilizando el objeto rlNumericSpec y proporcionando el límite inferior y superior para las lecturas de distancia con elementos suficientes para cada posición angular en el sensor de distancia.

obsInfo = rlNumericSpec([numel(scanAngles) 1],... "LowerLimit",zeros(numel(scanAngles),1),... "UpperLimit",ones(numel(scanAngles),1)*maxRange); numObservations = obsInfo.Dimension(1);

Defina los parámetros de acción, actInfo. La acción es el vector de comando de control, , normalizado a .

numActions = 2; actInfo = rlNumericSpec([numActions 1],... "LowerLimit",-1,... "UpperLimit",1);

Construya el objeto de interfaz del entorno utilizando rlSimulinkEnv (Reinforcement Learning Toolbox). Especifique el modelo, el nombre del bloque del agente, los parámetros de observación y los parámetros de acción. Establezca la función de restablecimiento de la simulación con exampleHelperRLAvoidObstaclesResetFcn. Esta función reinicia la simulación situando al robot en una nueva ubicación aleatoria para comenzar a evadir obstáculos.

env = rlSimulinkEnv(mdl,agentBlk,obsInfo,actInfo);

env.ResetFcn = @(in)exampleHelperRLAvoidObstaclesResetFcn(in,scanAngles,maxRange,mapMatrix);

env.UseFastRestart = "Off";Para ver otro ejemplo que configura un entorno de Simulink® para el entrenamiento, consulte Control Water Level in a Tank Using a DDPG Agent (Reinforcement Learning Toolbox).

Agente DDPG

Un agente DDPG aproxima la recompensa a largo plazo dadas las observaciones y las acciones utilizando una representación de la función del valor del crítico. Para crear el valor crítico, cree primero una red neuronal profunda con dos entradas, la observación y la acción, y una salida. Para más información sobre cómo crear una representación de la función de valor de una red neuronal profunda, consulte Create Actors, Critics, and Policy Objects (Reinforcement Learning Toolbox).

statePath = [

featureInputLayer(numObservations, "Normalization","none","Name","State")

fullyConnectedLayer(50,"Name","CriticStateFC1")

reluLayer("Name","CriticRelu1")

fullyConnectedLayer(25,"Name","CriticStateFC2")];

actionPath = [

featureInputLayer(numActions,"Normalization","none","Name","Action")

fullyConnectedLayer(25,"Name","CriticActionFC1")];

commonPath = [

additionLayer(2,"Name","add")

reluLayer("Name","CriticCommonRelu")

fullyConnectedLayer(1,"Name","CriticOutput")];

criticNetwork = layerGraph();

criticNetwork = addLayers(criticNetwork,statePath);

criticNetwork = addLayers(criticNetwork,actionPath);

criticNetwork = addLayers(criticNetwork,commonPath);

criticNetwork = connectLayers(criticNetwork,"CriticStateFC2","add/in1");

criticNetwork = connectLayers(criticNetwork,"CriticActionFC1","add/in2");

criticNetwork = dlnetwork(criticNetwork);Luego, especifique las opciones para el optimizador crítico utilizando rlOptimizerOptions.

Por último, cree la representación del crítico utilizando la red neuronal profunda y las opciones especificadas. También debe indicar las especificaciones de acción y observación del crítico, que se obtiene de la interfaz del entorno. Para obtener más información, consulte rlQValueFunction (Reinforcement Learning Toolbox).

criticOptions = rlOptimizerOptions("LearnRate",1e-3,"L2RegularizationFactor",1e-4,"GradientThreshold",1); critic = rlQValueFunction(criticNetwork,obsInfo,actInfo,"ObservationInputNames","State","ActionInputNames","Action");

Un agente DDPG decide qué acción tomar dadas las observaciones utilizando una representación de actor. Para crear el actor, cree primero una red neuronal profunda con una entrada, la observación, y una salida, la acción.

Por último, construya el actor de forma similar al crítico. Para obtener más información, consulte rlContinuousDeterministicActor (Reinforcement Learning Toolbox).

actorNetwork = [

featureInputLayer(numObservations,"Normalization","none","Name","State")

fullyConnectedLayer(50,"Name","actorFC1")

reluLayer("Name","actorReLU1")

fullyConnectedLayer(50, "Name","actorFC2")

reluLayer("Name","actorReLU2")

fullyConnectedLayer(2, "Name","actorFC3")

tanhLayer("Name","Action")];

actorNetwork = dlnetwork(actorNetwork);

actorOptions = rlOptimizerOptions("LearnRate",1e-4,"L2RegularizationFactor",1e-4,"GradientThreshold",1);

actor = rlContinuousDeterministicActor(actorNetwork,obsInfo,actInfo);Crear un objeto agente DDPG

Especifique las opciones del agente.

agentOpts = rlDDPGAgentOptions(... "SampleTime",sampleTime,... "ActorOptimizerOptions",actorOptions,... "CriticOptimizerOptions",criticOptions,... "DiscountFactor",0.995, ... "MiniBatchSize",128, ... "ExperienceBufferLength",1e6); agentOpts.NoiseOptions.Variance = 0.1; agentOpts.NoiseOptions.VarianceDecayRate = 1e-5;

Cree el objeto rlDDPGAgent. La variable obstacleAvoidanceAgent se utiliza en el modelo para el bloque Agent.

obstacleAvoidanceAgent = rlDDPGAgent(actor,critic,agentOpts);

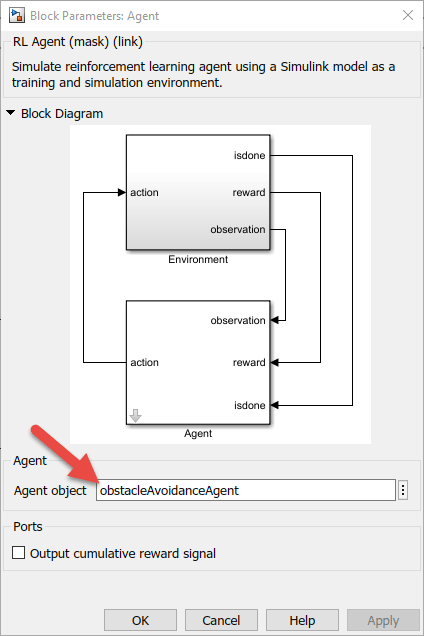

open_system(mdl + "/Agent")

Recompensa

La función de recompensa para el agente se modela como se muestra.

El agente obtiene la recompensa por evadir el obstáculo más próximo, lo que minimiza el peor escenario posible. Además, el agente recibe una recompensa positiva por velocidades lineales más altas y recibe una recompensa negativa por velocidades angulares más altas. Esta estrategia de recompensa ayuda a que el agente no circule en círculos. Ajustar las recompensas es clave para entrenar adecuadamente a un agente, de modo que las recompensas varíen en función de la aplicación.

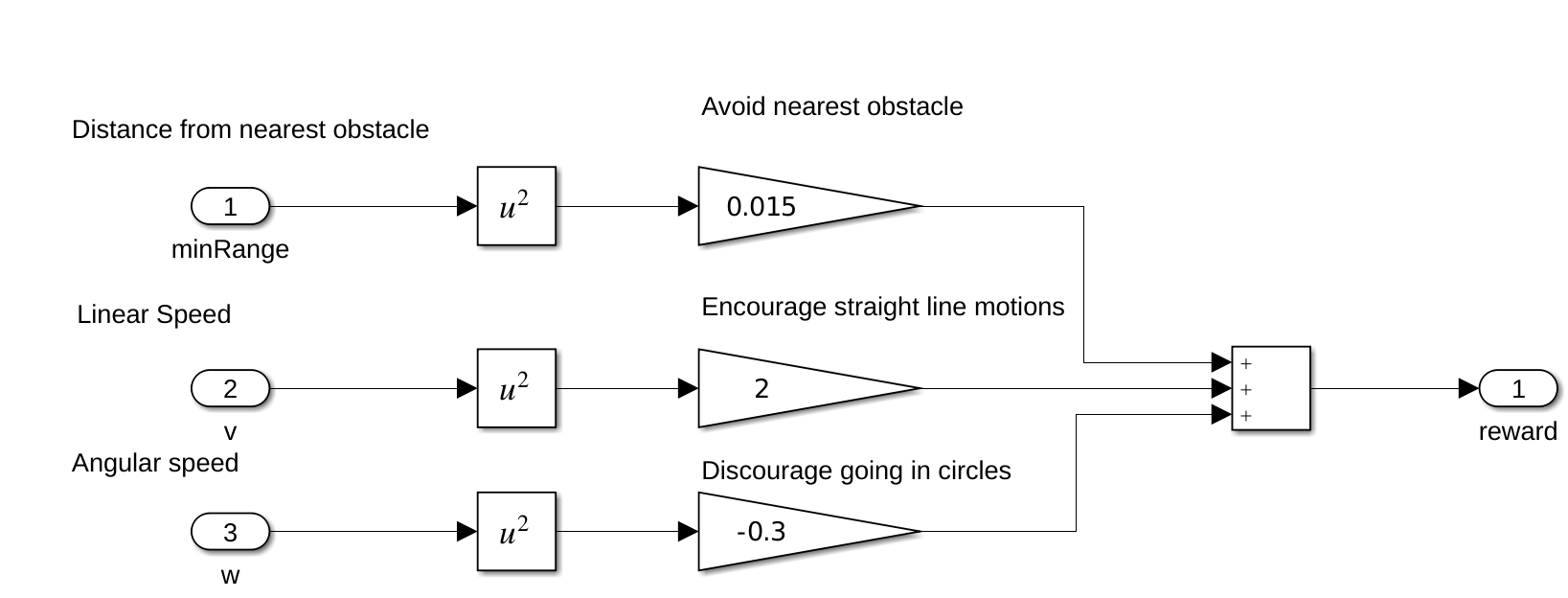

Entrenar al agente

Para entrenar al agente, especifique primero las opciones de entrenamiento. Para este ejemplo, utilice las siguientes opciones:

Entrenar durante un máximo de

10000episodios y que cada episodio dure un máximo demaxStepsunidades de tiempo.Mostrar el progreso del entrenamiento en el cuadro de diálogo Episode Manager (establecer la opción

Plots) y habilitar la visualización de la línea de comandos (establecer la opciónVerboseen true).Detener el entrenamiento cuando el agente reciba una recompensa promedio acumulativa superior a 400 en cincuenta episodios consecutivos.

Para obtener más información, consulte rlTrainingOptions (Reinforcement Learning Toolbox).

maxEpisodes = 10000; maxSteps = ceil(Tfinal/sampleTime); trainOpts = rlTrainingOptions(... "MaxEpisodes",maxEpisodes, ... "MaxStepsPerEpisode",maxSteps, ... "ScoreAveragingWindowLength",50, ... "StopTrainingCriteria","AverageReward", ... "StopTrainingValue",400, ... "Verbose", true, ... "Plots","training-progress");

Entrene al agente utilizando la función train (Reinforcement Learning Toolbox). El entrenamiento es un proceso computacionalmente intensivo que tarda varios minutos en completarse. Para ahorrar tiempo durante la ejecución de este ejemplo, cargue un agente preentrenado estableciendo doTraining en false. Para entrenar al agente por su cuenta, establezca doTraining en true.

doTraining = false; % Toggle this to true for training. if doTraining % Train the agent. trainingStats = train(obstacleAvoidanceAgent,env,trainOpts); else % Load pretrained agent for the example. load exampleHelperAvoidObstaclesAgent obstacleAvoidanceAgent end

Reinforcement Learning Episode Manager puede utilizarse para realizar un seguimiento del progreso del entrenamiento por episodios. A medida que aumenta el número de episodios, desea que aumente el valor de la recompensa.

Realizar la simulación

Utilice el agente entrenado para simular el desplazamiento del robot en el mapa y evadir obstáculos durante 200 segundos.

set_param("exampleHelperAvoidObstaclesMobileRobot","StopTime","200"); out = sim("exampleHelperAvoidObstaclesMobileRobot.slx");

Visualizar

Para visualizar la simulación del desplazamiento del robot por el entorno con las lecturas del sensor de distancia, utilice el helper, exampleHelperAvoidObstaclesPosePlot.

for i = 1:5:size(out.range,3) u = out.pose(i,:); r = out.range(:,:,i); exampleHelperAvoidObstaclesPosePlot(u,mapMatrix,mapScale,r,scanAngles,ax); end

![Figure simpleMap contains an axes object. The axes object with title Binary Occupancy Grid, xlabel X [meters], ylabel Y [meters] contains 6 objects of type patch, line, image. One or more of the lines displays its values using only markers](avoidobstaclesusingreinforcementlearningformobilerobotsexample_06_es.png)

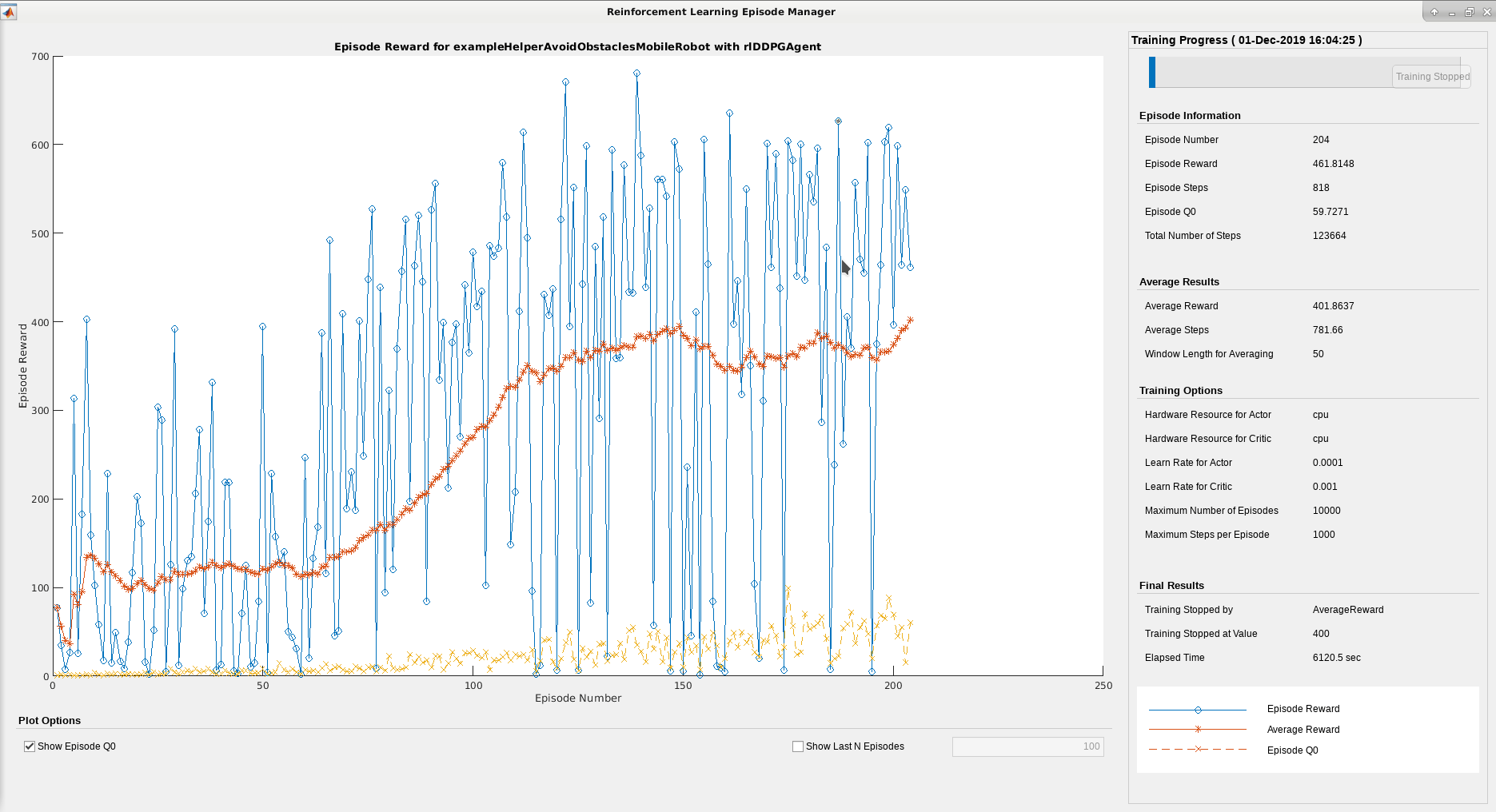

Extensibilidad

Ahora puede utilizar este agente para simular el desplazamiento en un mapa diferente. Otro mapa generado a partir de escaneos de LiDAR de un entorno de oficinas se utiliza con el mismo modelo entrenado. Este mapa representa un escenario más realista para aplicar el modelo entrenado después del entrenamiento.

Cambiar el mapa

mapMatrix = office_area_map.occupancyMatrix > 0.5; mapScale = 10; initX = 20; initY = 30; initTheta = 0; fig = figure("Name","office_area_map"); set(fig,"Visible","on"); ax = axes(fig); show(binaryOccupancyMap(mapMatrix,mapScale),"Parent",ax); hold on plotTransforms([initX,initY,0],eul2quat([initTheta, 0, 0]),"MeshFilePath","groundvehicle.stl","View","2D"); light; hold off

![Figure office_area_map contains an axes object. The axes object with title Binary Occupancy Grid, xlabel X [meters], ylabel Y [meters] contains 5 objects of type patch, line, image.](avoidobstaclesusingreinforcementlearningformobilerobotsexample_07_es.png)

Realizar la simulación

Simule la trayectoria del robot a través del nuevo mapa. Restablezca el tiempo de simulación por si fuera necesario reentrenar y reajustare al agente más adelante.

out = sim("exampleHelperAvoidObstaclesMobileRobot.slx"); set_param("exampleHelperAvoidObstaclesMobileRobot","StopTime","inf");

Visualizar

for i = 1:5:size(out.range,3) u = out.pose(i,:); r = out.range(:,:,i); exampleHelperAvoidObstaclesPosePlot(u,mapMatrix,mapScale,r,scanAngles,ax); end

![Figure office_area_map contains an axes object. The axes object with title Binary Occupancy Grid, xlabel X [meters], ylabel Y [meters] contains 6 objects of type patch, line, image. One or more of the lines displays its values using only markers](avoidobstaclesusingreinforcementlearningformobilerobotsexample_08_es.png)