importNetworkFromPyTorch

Syntax

net = importNetworkFromPyTorch(modelfile)

net = importNetworkFromPyTorch(modelfile,Name=Value)

Description

Add-On Required: This feature requires the Deep Learning Toolbox Converter for PyTorch Models add-on.

net = importNetworkFromPyTorch(modelfile) imports a pretrained and traced PyTorch model from the file modelfile. The function returns the network net as an uninitialized dlnetwork object.

importNetworkFromPyTorch requires the Deep Learning Toolbox™ Converter for PyTorch Models support package. If this support package is not installed, importNetworkFromPyTorch provides a download link.

Note

The importNetworkFromPyTorch function might generate a custom layer when importing a PyTorch layer. For more information, see Algorithms. The function saves the generated custom layers in the +modelfile namespace.

net = importNetworkFromPyTorch(modelfile,Name=Value) imports a network from PyTorch with additional options specified by one or more name-value arguments. For example, Namespace="CustomLayers" saves the generated custom layers and associated functions in the +CustomLayers namespace in the current folder. If you specify the PyTorchInputSizes name-value argument, the function might return the network net as an initialized dlnetwork object.

For more information about how to trace a PyTorch model, see https://pytorch.org/docs/stable/generated/torch.jit.trace.html.

Examples

Import a pretrained and traced PyTorch model as an uninitialized dlnetwork object. Then, add an input layer to the imported network.

This example imports the MNASNet (Copyright© Soumith Chintala 2016) PyTorch model. MNASNet is an image classification model that is trained with images from the ImageNet database. Download the mnasnet1_0 file, which is approximately 17 MB in size, from the MathWorks website.

modelfile = matlab.internal.examples.downloadSupportFile("nnet", ...
    "data/PyTorchModels/mnasnet1_0.pt");

Import the MNASNet model by using the importNetworkFromPyTorch function. The function imports the model as an uninitialized dlnetwork object without an input layer. The software displays a warning that reports the number of input layers, the type of input layer to add, and how to add it.

net = importNetworkFromPyTorch(modelfile)

Warning: Network was imported as an uninitialized dlnetwork. Before using the network, add input layer(s):

% Create imageInputLayer for the network input at index 1:
inputLayer1 = imageInputLayer(<inputSize1>, Normalization="none");

% Add input layers to the network and initialize:
net = addInputLayer(net, inputLayer1, Initialize=true);

net =

dlnetwork with properties:

Layers: [3×1 nnet.cnn.layer.Layer]

Connections: [2×2 table]

Learnables: [210×3 table]

State: [104×3 table]

InputNames: {'TopLevelModule:layers'}

OutputNames: {'TopLevelModule:classifier'}

Initialized: 0

View summary with summary.

Specify the input size of the imported network and create an image input layer. Then, add the image input layer to the imported network and initialize the network by using the addInputLayer function.

InputSize = [224 224 3];

inputLayer = imageInputLayer(InputSize,Normalization="none");

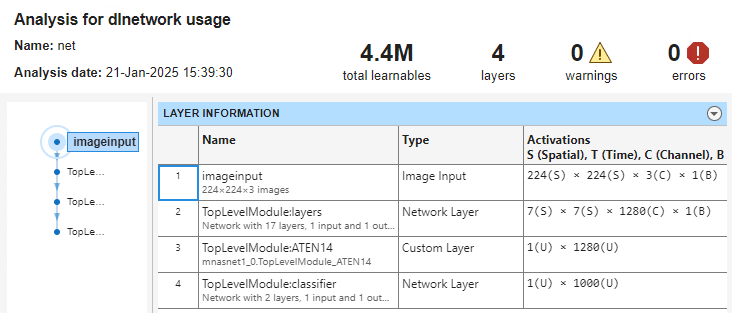

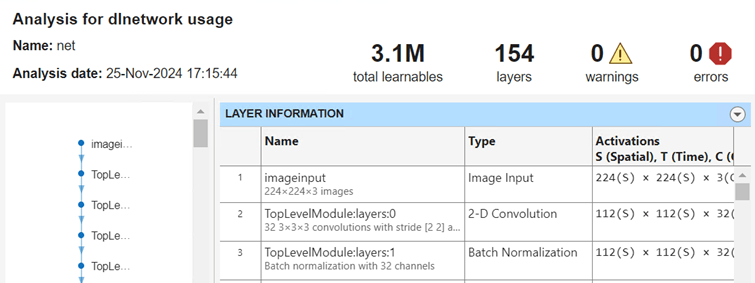

net = addInputLayer(net,inputLayer,Initialize=true);

Analyze the imported network and view the input layer. The network is ready to use for prediction.

analyzeNetwork(net)

Import a pretrained and traced PyTorch model as an initialized dlnetwork object by using the PyTorchInputSizes name-value argument.

This example imports the MNASNet (Copyright© Soumith Chintala 2016) PyTorch model. MNASNet is an image classification model that is trained with images from the ImageNet database. Download the mnasnet1_0.pt file, which is approximately 17 MB in size, from the MathWorks website.

modelfile = matlab.internal.examples.downloadSupportFile("nnet", ...
    "data/PyTorchModels/mnasnet1_0.pt");

Import the MNASNet model by using the importNetworkFromPyTorch function with the PyTorchInputSizes name-value argument. A 224-by-224 color image is a valid input size for this PyTorch model. The software automatically creates and adds the input layer for a batch of images, so you can import the network as an initialized network in a single line of code.

net = importNetworkFromPyTorch(modelfile,PyTorchInputSizes=[NaN,3,224,224])

net =

dlnetwork with properties:

Layers: [4×1 nnet.cnn.layer.Layer]

Connections: [3×2 table]

Learnables: [210×3 table]

State: [104×3 table]

InputNames: {'InputLayer1'}

OutputNames: {'TopLevelModule:classifier'}

Initialized: 1

View summary with summary.

The network is ready to use for prediction.

Import a pretrained and traced PyTorch model as an uninitialized dlnetwork object. Then, initialize the imported network.

This example imports the MNASNet (Copyright© Soumith Chintala 2016) PyTorch model. MNASNet is an image classification model that is trained with images from the ImageNet database. Download the mnasnet1_0 file, which is approximately 17 MB in size, from the MathWorks website.

modelfile = matlab.internal.examples.downloadSupportFile("nnet", ...
    "data/PyTorchModels/mnasnet1_0.pt");

Import the MNASNet model by using the importNetworkFromPyTorch function. The function imports the model as an uninitialized dlnetwork object.

net = importNetworkFromPyTorch(modelfile)

Warning: Network was imported as an uninitialized dlnetwork. Before using the network, add input layer(s):

% Create imageInputLayer for the network input at index 1:
inputLayer1 = imageInputLayer(<inputSize1>, Normalization="none");

% Add input layers to the network and initialize:
net = addInputLayer(net, inputLayer1, Initialize=true);

net =

dlnetwork with properties:

Layers: [3×1 nnet.cnn.layer.Layer]

Connections: [2×2 table]

Learnables: [210×3 table]

State: [104×3 table]

InputNames: {'TopLevelModule:layers'}

OutputNames: {'TopLevelModule:classifier'}

Initialized: 0

View summary with summary.

net is a dlnetwork object that consists of a single networkLayer layer that contains a nested network. Specify the input size for net and create a random dlarray object that represents the input to the network. The data format of the dlarray object must have the dimensions "SSCB" (spatial, spatial, channel, batch) to represent a 2-D image input. For more information, see Data Formats for Prediction with dlnetwork.

InputSize = [224 224 3];

X = dlarray(rand(InputSize),"SSCB");

Initialize the learnable parameters of the imported network by using the initialize function.

net = initialize(net,X);

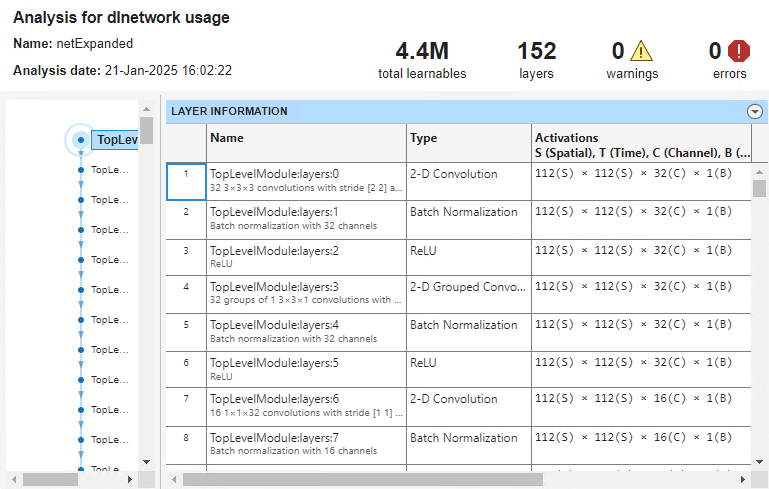

The imported network is now ready to use for prediction. Expand the networkLayer by using the expandLayers function and analyze the imported network.

netExpanded = expandLayers(net)

netExpanded =

dlnetwork with properties:

Layers: [152×1 nnet.cnn.layer.Layer]

Connections: [161×2 table]

Learnables: [210×3 table]

State: [104×3 table]

InputNames: {'TopLevelModule:layers:0'}

OutputNames: {'TopLevelModule:classifier:1:ATEN12'}

Initialized: 1

View summary with summary.

analyzeNetwork(netExpanded)

Import a pretrained and traced PyTorch model as an uninitialized dlnetwork object to classify an image.

This example imports the MNASNet (Copyright© Soumith Chintala 2016) PyTorch model. MNASNet is an image classification model that is trained with images from the ImageNet database. Download the mnasnet1_0 file, which is approximately 17 MB in size, from the MathWorks website.

modelfile = matlab.internal.examples.downloadSupportFile("nnet", ...
    "data/PyTorchModels/mnasnet1_0.pt");

Import the MNASNet model by using the importNetworkFromPyTorch function. The function imports the model as an uninitialized dlnetwork object.

net = importNetworkFromPyTorch(modelfile)

Warning: Network was imported as an uninitialized dlnetwork. Before using the network, add input layer(s):

% Create imageInputLayer for the network input at index 1:
inputLayer1 = imageInputLayer(<inputSize1>, Normalization="none");

% Add input layers to the network and initialize:
net = addInputLayer(net, inputLayer1, Initialize=true);

net =

dlnetwork with properties:

Layers: [3×1 nnet.cnn.layer.Layer]

Connections: [2×2 table]

Learnables: [210×3 table]

State: [104×3 table]

InputNames: {'TopLevelModule:layers'}

OutputNames: {'TopLevelModule:classifier'}

Initialized: 0

View summary with summary.

Specify the input size of the imported network and create an image input layer. Then, add the image input layer to the imported network and initialize the network by using the addInputLayer function.

InputSize = [224 224 3];

inputLayer = imageInputLayer(InputSize,Normalization="none");

net = addInputLayer(net,inputLayer,Initialize=true);

Read the image you want to classify.

Im = imread("peppers.png");

Resize the image to match the input size of the network. Show the image.

InputSize = [224 224 3];
Im = imresize(Im,InputSize(1:2));
imshow(Im)

The inputs to MNASNet require further preprocessing. Rescale the image to the range [0, 1]. Then, normalize the image by subtracting the mean of the training images and dividing by the standard deviation of the training images. For more information, see Input Data Preprocessing.

Im = rescale(Im,0,1);
meanIm = [0.485 0.456 0.406];
stdIm = [0.229 0.224 0.225];
Im = (Im - reshape(meanIm,[1 1 3]))./reshape(stdIm,[1 1 3]);
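The per-channel normalization above relies on implicit expansion: the 1-by-1-by-3 statistics are applied to every pixel of the matching channel. The following is a minimal sketch on a hypothetical 2-by-2 RGB image (the constant pixel value 0.5 is an assumption chosen only to make the arithmetic easy to follow).

```matlab
% Hypothetical 2-by-2 RGB image in which every pixel has the value 0.5.
Im = 0.5*ones(2,2,3);

% Per-channel statistics used in the example above.
meanIm = [0.485 0.456 0.406];
stdIm = [0.229 0.224 0.225];

% Reshape the statistics to 1-by-1-by-3 so that implicit expansion
% applies each value to every pixel of the corresponding channel.
ImNorm = (Im - reshape(meanIm,[1 1 3]))./reshape(stdIm,[1 1 3]);

% Every pixel of the first channel equals (0.5 - 0.485)/0.229.
redValue = ImNorm(1,1,1);
```

The result keeps the original 2-by-2-by-3 size, and each channel holds a single repeated value because the input image is constant.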

Convert the image to a dlarray object. Format the image with the dimensions "SSCB" (spatial, spatial, channel, batch).

Im_dlarray = dlarray(single(Im),"SSCB");

Get the class names from squeezenet, which is also trained with ImageNet images.

[~,ClassNames] = imagePretrainedNetwork("squeezenet");

Classify the image and find the predicted label.

prob = predict(net,Im_dlarray);
[~,label_ind] = max(prob);

Display the classification result.

ClassNames(label_ind)

ans = "bell pepper"

Import a pretrained and traced PyTorch model as an uninitialized dlnetwork object. Then, find the custom layers that the software generates.

This example uses the findCustomLayers helper function.

This example imports the MNASNet (Copyright© Soumith Chintala 2016) PyTorch model. MNASNet is an image classification model that is trained with images from the ImageNet database. Download the mnasnet1_0 file, which is approximately 17 MB in size, from the MathWorks website.

modelfile = matlab.internal.examples.downloadSupportFile("nnet", ...
    "data/PyTorchModels/mnasnet1_0.pt");

Import the MNASNet model by using the importNetworkFromPyTorch function. The function imports the model as an uninitialized dlnetwork object.

net = importNetworkFromPyTorch(modelfile)

Warning: Network was imported as an uninitialized dlnetwork. Before using the network, add input layer(s):

% Create imageInputLayer for the network input at index 1:
inputLayer1 = imageInputLayer(<inputSize1>, Normalization="none");

% Add input layers to the network and initialize:
net = addInputLayer(net, inputLayer1, Initialize=true);

net =

dlnetwork with properties:

Layers: [3×1 nnet.cnn.layer.Layer]

Connections: [2×2 table]

Learnables: [210×3 table]

State: [104×3 table]

InputNames: {'TopLevelModule:layers'}

OutputNames: {'TopLevelModule:classifier'}

Initialized: 0

View summary with summary.

net is a dlnetwork object that consists of a single networkLayer layer that contains a nested network. Expand the nested network layers by using the expandLayers function.

net = expandLayers(net);

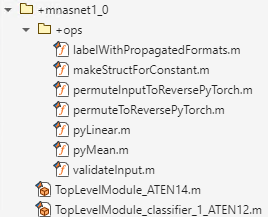

The importNetworkFromPyTorch function generates custom layers for the PyTorch layers that the function cannot convert to built-in MATLAB layers or functions. For more information, see Algorithms. The software saves the automatically generated custom layers in the +mnasnet1_0 namespace in the current folder and the associated functions in the inner namespace +ops. To see the custom layers and associated functions, inspect the namespace.

You can also find the indices of the generated custom layers by using the findCustomLayers helper function. Display the custom layers.

ind = findCustomLayers(net.Layers,'+mnasnet1_0')

ind = 1×2

150 152

net.Layers(ind)

ans =

2×1 Layer array with layers:

1 'TopLevelModule:ATEN14' Custom Layer mnasnet1_0.TopLevelModule_ATEN14

2 'TopLevelModule:classifier:1:ATEN12' Custom Layer mnasnet1_0.TopLevelModule_classifier_1_ATEN12

Helper Function

The findCustomLayers helper function returns a vector of the indices of the custom layers that importNetworkFromPyTorch automatically generates.

function indices = findCustomLayers(layers,Namespace)
% List the MATLAB code files in the namespace folder, for example +mnasnet1_0.
s = what(['.' filesep Namespace]);
indices = zeros(1,length(s.m));
for i = 1:length(layers)
    for j = 1:length(s.m)
        % Compare the layer class with <namespace>.<file name without .m>.
        if strcmpi(class(layers(i)),[Namespace(2:end) '.' s.m{j}(1:end-2)])
            indices(j) = i;
        end
    end
end
end
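The helper above matches each layer class against the files in the namespace folder. As an alternative sketch, the same lookup could be done by testing the class-name prefix alone, without reading the folder. This variant is not part of the original example: the layerClasses array is hypothetical and stands in for the values that class(layers(i)) would return for each layer.

```matlab
% Hypothetical class names, standing in for class(layers(i)) of each layer.
layerClasses = ["nnet.cnn.layer.Convolution2DLayer"
                "mnasnet1_0.TopLevelModule_ATEN14"
                "nnet.cnn.layer.ReLULayer"
                "mnasnet1_0.TopLevelModule_classifier_1_ATEN12"];

% Namespace name without the leading "+".
namespaceName = "mnasnet1_0";

% A layer belongs to the namespace if its class name starts with "<namespace>.".
ind = find(startsWith(layerClasses,namespaceName + "."))';
```

For these four hypothetical class names, ind contains the positions of the second and fourth entries.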

This example shows how to import a network from PyTorch and train the network to classify new images. Use the importNetworkFromPyTorch function to import the network as an uninitialized dlnetwork object. Train the network by using a custom training loop.

This example uses the modelLoss, modelPredictions, and preprocessMiniBatchPredictors helper functions.

This example also uses the supporting file new_fcLayer. To access the supporting file, open the example in Live Editor.

Load Data

Unzip the MerchData data set, which contains 75 images. Load the new images as an image datastore. The imageDatastore function automatically labels the images based on folder names and stores the data as an ImageDatastore object. Divide the data into training and validation data sets. Use 70% of the images for training and 30% for validation.

unzip("MerchData.zip");
imds = imageDatastore("MerchData", ...
    IncludeSubfolders=true, ...
    LabelSource="foldernames");
[imdsTrain,imdsValidation] = splitEachLabel(imds,0.7);

The network used in this example requires input images of size 224-by-224-by-3. To automatically resize the training images, use an augmented image datastore. In addition to resizing, randomly reflect the training images along the vertical axis, translate them by up to 30 pixels, and scale them by up to 10% in the horizontal and vertical directions. Data augmentation helps prevent the network from overfitting and memorizing the exact details of the training images.

inputSize = [224 224 3];
pixelRange = [-30 30];
scaleRange = [0.9 1.1];
imageAugmenter = imageDataAugmenter(...
    RandXReflection=true, ...
    RandXTranslation=pixelRange, ...
    RandYTranslation=pixelRange, ...
    RandXScale=scaleRange, ...
    RandYScale=scaleRange);
augimdsTrain = augmentedImageDatastore(inputSize(1:2),imdsTrain, ...
    DataAugmentation=imageAugmenter);

To automatically resize the validation images without performing further data augmentation, use an augmented image datastore without specifying any additional preprocessing operations.

augimdsValidation = augmentedImageDatastore(inputSize(1:2),imdsValidation);

Determine the number of classes in the training data.

classes = categories(imdsTrain.Labels);
numClasses = numel(classes);

Import Network

Download the MNASNet (Copyright© Soumith Chintala 2016) PyTorch model. MNASNet is an image classification model that is trained with images from the ImageNet database. Download the mnasnet1_0 file, which is approximately 17 MB in size, from the MathWorks website.

modelfile = matlab.internal.examples.downloadSupportFile("nnet", ...
    "data/PyTorchModels/mnasnet1_0.pt");

Import the MNASNet model as an uninitialized dlnetwork object by using the importNetworkFromPyTorch function.

net = importNetworkFromPyTorch(modelfile)

Warning: Network was imported as an uninitialized dlnetwork. Before using the network, add input layer(s):

% Create imageInputLayer for the network input at index 1:
inputLayer1 = imageInputLayer(<inputSize1>, Normalization="none");

% Add input layers to the network and initialize:
net = addInputLayer(net, inputLayer1, Initialize=true);

net =

dlnetwork with properties:

Layers: [3×1 nnet.cnn.layer.Layer]

Connections: [2×2 table]

Learnables: [210×3 table]

State: [104×3 table]

InputNames: {'TopLevelModule:layers'}

OutputNames: {'TopLevelModule:classifier'}

Initialized: 0

View summary with summary.

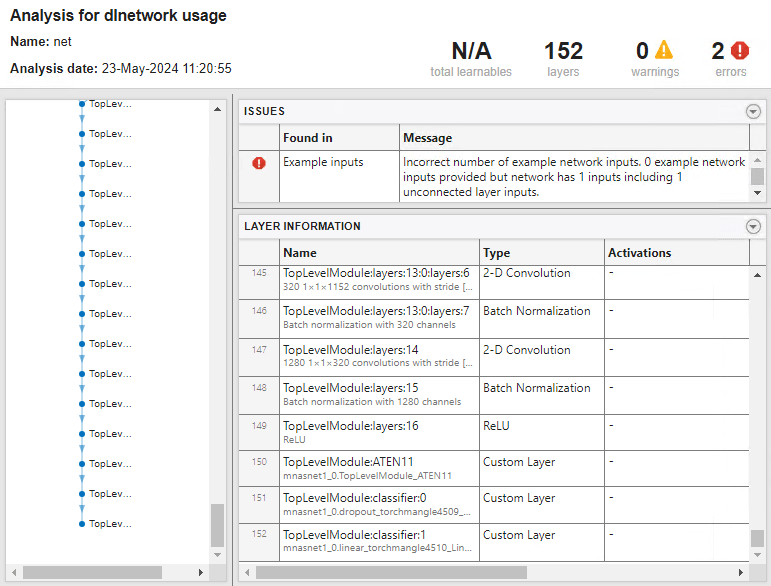

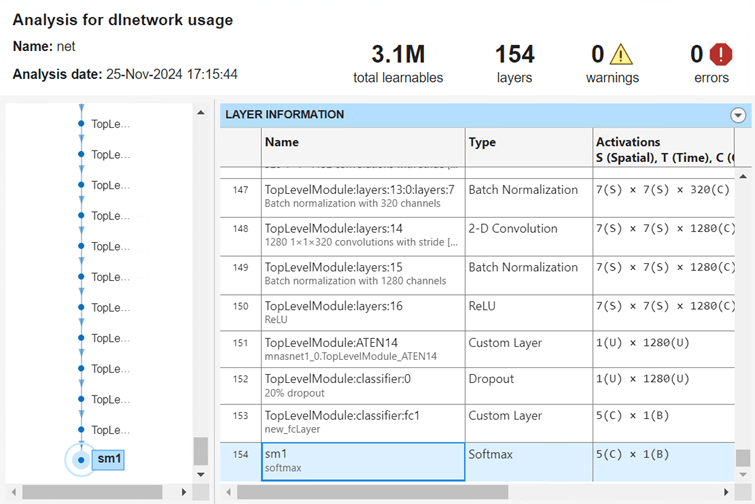

net is a dlnetwork object that consists of a single networkLayer layer that contains a nested network. Expand the networkLayer by using the expandLayers function. Display the final layer of the imported network by using the analyzeNetwork function.

net = expandLayers(net);
analyzeNetwork(net)

TopLevelModule:classifier:1:ATEN12 is a custom layer generated by the importNetworkFromPyTorch function and the last learnable layer of the imported network. This layer contains information on how to combine the features that the network extracts into class probabilities and a loss value.

Replace Final Layer

To retrain the imported network to classify new images, replace the final layers with a new fully connected layer. The new layer new_fcLayer is adapted to the new data set and must also be a custom layer because it has two inputs.

Initialize the new_fcLayer layer and replace the TopLevelModule:classifier:1:ATEN12 layer with new_fcLayer.

newLayer = new_fcLayer("TopLevelModule:classifier:fc1","Custom Layer", ...
    {'in'},{'out'},numClasses);
net = replaceLayer(net,"TopLevelModule:classifier:1:ATEN12",newLayer);

Add a softmax layer to the network and connect the softmax layer to the new fully connected layer.

net = addLayers(net,softmaxLayer(Name="sm1"));
net = connectLayers(net,"TopLevelModule:classifier:fc1","sm1");
net.OutputNames = "sm1";

Add Input Layer

Add an image input layer to the network and initialize the network.

inputLayer = imageInputLayer(inputSize,Normalization="none");

net = addInputLayer(net,inputLayer,Initialize=true);

Analyze the network. View the first layer and the final layers.

analyzeNetwork(net)

Define Model Loss Function

Training a deep neural network is an optimization task. By considering a neural network as a function f(X;θ), where X is the network input and θ is the set of learnable parameters, you can optimize θ so that it minimizes some loss value based on the training data. For example, optimize the learnable parameters θ such that, for given inputs X with corresponding targets T, they minimize the error between the predictions Y = f(X;θ) and T.

Create the modelLoss function, listed in the Model Loss Function section of the example, which takes as input the dlnetwork object and a mini-batch of input data with corresponding targets. The function returns the loss, the gradients of the loss with respect to the learnable parameters, and the network state.

Specify Training Options

Train for 15 epochs with a mini-batch size of 20.

numEpochs = 15;
miniBatchSize = 20;

Specify the options for SGDM optimization. Specify an initial learning rate of 0.001 with a decay of 0.005, and a momentum of 0.9.

initialLearnRate = 0.001;
decay = 0.005;
momentum = 0.9;

Train Network

Create a minibatchqueue object that processes and manages mini-batches of images during training. For each mini-batch, follow these steps:

Use the custom mini-batch preprocessing function preprocessMiniBatch (defined at the end of this example) to convert the labels to one-hot encoded variables.

Format the image data with the dimension labels "SSCB" (spatial, spatial, channel, batch). By default, the minibatchqueue object converts the data to dlarray objects with underlying type single. Do not format the class labels.

Train on a GPU if one is available. By default, the minibatchqueue object converts each output to a gpuArray object if a GPU is available. Using a GPU requires a Parallel Computing Toolbox™ license and a supported GPU device. For information on supported devices, see GPU Computing Requirements (Parallel Computing Toolbox).

mbq = minibatchqueue(augimdsTrain,...
    MiniBatchSize=miniBatchSize,...
    MiniBatchFcn=@preprocessMiniBatch,...
    MiniBatchFormat=["SSCB" ""]);

Initialize the velocity parameter for the stochastic gradient descent with momentum (SGDM) solver.

velocity = [];

Calculate the total number of iterations for the training progress monitor.

numObservationsTrain = numel(imdsTrain.Files);
numIterationsPerEpoch = ceil(numObservationsTrain/miniBatchSize);
numIterations = numEpochs*numIterationsPerEpoch;

Initialize the trainingProgressMonitor object. Because the timer starts when you create the monitor object, create the object immediately before the training loop.

monitor = trainingProgressMonitor(Metrics="Loss",Info=["Epoch","LearnRate"],XLabel="Iteration");

Train the network by using a custom training loop. For each epoch, shuffle the data and loop over mini-batches of data. For each mini-batch, follow these steps:

Evaluate the model loss, gradients, and state by using the dlfeval and modelLoss functions, and update the network state.

Determine the learning rate for the time-based decay learning rate schedule.

Update the network parameters by using the sgdmupdate function.

Update the loss, learning rate, and epoch values in the training progress monitor.

Stop if the Stop property is true. The Stop property value of the TrainingProgressMonitor object changes to true when you click the Stop button.

epoch = 0;
iteration = 0;

% Loop over epochs.
while epoch < numEpochs && ~monitor.Stop
    epoch = epoch + 1;

    % Shuffle data.
    shuffle(mbq);

    % Loop over mini-batches.
    while hasdata(mbq) && ~monitor.Stop
        iteration = iteration + 1;

        % Read mini-batch of data.
        [X,T] = next(mbq);

        % Evaluate the model gradients, state, and loss using dlfeval and the
        % modelLoss function and update the network state.
        [loss,gradients,state] = dlfeval(@modelLoss,net,X,T);
        net.State = state;

        % Determine learning rate for time-based decay learning rate schedule.
        learnRate = initialLearnRate/(1 + decay*iteration);

        % Update the network parameters using the SGDM optimizer.
        [net,velocity] = sgdmupdate(net,gradients,velocity,learnRate,momentum);

        % Update the training progress monitor.
        recordMetrics(monitor,iteration,Loss=loss);
        updateInfo(monitor,Epoch=epoch,LearnRate=learnRate);
        monitor.Progress = 100*iteration/numIterations;
    end
end
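To see how the time-based decay schedule in the loop behaves, the following sketch evaluates the learning rate for every iteration outside of training. The training-set size numObservationsTrain = 55 is a hypothetical value chosen for illustration; the actual value depends on the split of the data set.

```matlab
% Training options from the example.
initialLearnRate = 0.001;
decay = 0.005;
numEpochs = 15;
miniBatchSize = 20;

% Hypothetical number of training observations, for illustration only.
numObservationsTrain = 55;

% The last mini-batch of each epoch can be partial, hence the ceil.
numIterationsPerEpoch = ceil(numObservationsTrain/miniBatchSize);
numIterations = numEpochs*numIterationsPerEpoch;

% Time-based decay: the learning rate shrinks as the iteration count grows.
iteration = 1:numIterations;
learnRate = initialLearnRate./(1 + decay*iteration);

firstRate = learnRate(1);    % initialLearnRate/(1 + decay)
lastRate = learnRate(end);   % initialLearnRate/(1 + decay*numIterations)
```

Under these assumptions the schedule runs for 45 iterations and the learning rate decreases monotonically from about 0.000995 to about 0.000816.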

Classify Validation Images

Test the classification accuracy of the model by comparing the predictions on a validation set with the true labels.

After training, making predictions on new data does not require the labels. Create a minibatchqueue object that contains only the predictors of the test data:

To ignore the labels for testing, set the number of outputs of the mini-batch queue to 1.

Specify the same mini-batch size used for training.

Preprocess the predictors by using the preprocessMiniBatchPredictors function, listed at the end of the example.

For the single output of the datastore, specify the mini-batch format "SSCB" (spatial, spatial, channel, batch).

numOutputs = 1;
mbqTest = minibatchqueue(augimdsValidation,numOutputs, ...
    MiniBatchSize=miniBatchSize, ...
    MiniBatchFcn=@preprocessMiniBatchPredictors, ...
    MiniBatchFormat="SSCB");

Loop over the mini-batches and classify the images by using the modelPredictions function, listed at the end of the example.

YTest = modelPredictions(net,mbqTest,classes);

Evaluate the classification accuracy.

TTest = imdsValidation.Labels;
accuracy = mean(TTest == YTest)
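The accuracy is the fraction of validation images whose predicted label equals the true label. A minimal sketch with hypothetical labels (the class names and predictions below are made up for illustration and are not results from this example):

```matlab
% Hypothetical true and predicted labels for four validation images.
TTest = categorical(["cap" "cube" "cap" "torch"])';
YTest = categorical(["cap" "cube" "torch" "torch"])';

% The comparison returns a logical vector; its mean is the fraction of
% correct predictions.
accuracy = mean(TTest == YTest);
```

Three of the four hypothetical predictions match, so accuracy is 0.75.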

Visualize the predictions in a confusion chart. Large values on the diagonal indicate accurate predictions for the corresponding class. Large values off the diagonal indicate strong confusion between the corresponding classes.

figure
confusionchart(TTest,YTest)

Helper Functions

Model Loss Function

The modelLoss function takes as input a dlnetwork object net and a mini-batch of input data X with corresponding targets T. The function returns the loss, the gradients of the loss with respect to the learnable parameters in net, and the network state. To compute the gradients automatically, use the dlgradient function.

function [loss,gradients,state] = modelLoss(net,X,T)

% Forward data through network.
[Y,state] = forward(net,X);

% Calculate cross-entropy loss.
loss = crossentropy(Y,T);

% Calculate gradients of loss with respect to learnable parameters.
gradients = dlgradient(loss,net.Learnables);

end
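To make the loss value concrete, the following sketch computes a cross-entropy loss by hand for plain numeric arrays. It assumes one-hot targets and a loss averaged over the observations in the mini-batch. The scores and targets are hypothetical, and the sketch does not call the crossentropy function itself.

```matlab
% Hypothetical softmax outputs: 3 classes (rows) by 2 observations (columns).
Y = [0.7 0.2
     0.2 0.5
     0.1 0.3];

% One-hot targets: observation 1 belongs to class 1, observation 2 to class 2.
T = [1 0
     0 1
     0 0];

% Cross-entropy: negative log of the probability assigned to the true class,
% averaged over the observations.
numObservations = size(Y,2);
loss = -sum(T.*log(Y),"all")/numObservations;
```

Only the probabilities of the true classes (0.7 and 0.5) contribute, so the loss equals -(log(0.7) + log(0.5))/2.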

Model Predictions Function

The modelPredictions function takes as input a dlnetwork object net, a minibatchqueue of input data mbq, and the network classes. The function computes the model predictions by iterating over all the data in the minibatchqueue object. The function uses the onehotdecode function to find the predicted class with the highest score.

function Y = modelPredictions(net,mbq,classes)

Y = [];

% Loop over mini-batches.
while hasdata(mbq)
    X = next(mbq);

    % Make prediction.
    scores = predict(net,X);

    % Decode labels and append to output.
    labels = onehotdecode(scores,classes,1)';
    Y = [Y; labels];
end

end
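Decoding along the first dimension amounts to picking, for each observation, the class with the highest score. The sketch below illustrates that step with max on plain numeric arrays. The class names and scores are hypothetical, and the code is an illustration of the idea rather than the implementation of onehotdecode.

```matlab
% Hypothetical class names and scores: 3 classes (rows) by 2 observations.
classes = ["cap" "cube" "torch"];
scores = [0.1 0.8
          0.7 0.1
          0.2 0.1];

% Index of the highest score in each column (dimension 1 holds the classes).
[~,idx] = max(scores,[],1);

% Map the indices to class names and return a column of categorical labels.
labels = categorical(classes(idx),classes)';
```

For these scores, the first observation is decoded as "cube" and the second as "cap".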

Mini-Batch Preprocessing Function

The preprocessMiniBatch function preprocesses a mini-batch of predictors and labels by following these steps:

Preprocess the images by using the preprocessMiniBatchPredictors function.

Extract the label data from the incoming cell array and concatenate the data into a categorical array along the second dimension.

One-hot encode the categorical labels into numeric arrays. Encoding into the first dimension produces an encoded array that matches the shape of the network output.

function [X,T] = preprocessMiniBatch(dataX,dataT)

% Preprocess predictors.
X = preprocessMiniBatchPredictors(dataX);

% Extract label data from cell and concatenate.
T = cat(2,dataT{1:end});

% One-hot encode labels.
T = onehotencode(T,1);

end
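One-hot encoding into the first dimension yields a numClasses-by-numObservations array with a single 1 in each column. The following sketch builds such an array by hand for hypothetical labels. It illustrates the resulting layout and is not a substitute for onehotencode.

```matlab
% Hypothetical class names and a row of four categorical labels.
classNames = ["cap" "cube" "torch"];
labels = categorical(["cube" "cap" "torch" "cube"],classNames);

% Converting a categorical array to double returns the category indices.
idx = double(labels);

% Place a 1 in row idx(k) of column k.
T = zeros(numel(classNames),numel(labels));
T(sub2ind(size(T),idx,1:numel(labels))) = 1;
```

Each column of T contains exactly one 1, in the row of the corresponding class.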

Mini-Batch Predictors Preprocessing Function

The preprocessMiniBatchPredictors function preprocesses a mini-batch of predictors by extracting the image data from the input cell array and concatenating the data into a numeric array. For grayscale input, concatenating over the fourth dimension adds a third dimension to each image, to use as a singleton channel dimension.

function X = preprocessMiniBatchPredictors(dataX)

% Concatenate.
X = cat(4,dataX{1:end});

end
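The effect of concatenating along the fourth dimension can be checked on small random arrays. In this sketch, the cell arrays dataX and dataGray are hypothetical stand-ins for the data that a datastore would supply.

```matlab
% Hypothetical mini-batch of three RGB images.
dataX = {rand(224,224,3), rand(224,224,3), rand(224,224,3)};
X = cat(4,dataX{1:end});
sizeRGB = size(X);          % height, width, channels, batch

% Hypothetical mini-batch of two grayscale images (no channel dimension).
dataGray = {rand(28,28), rand(28,28)};
XGray = cat(4,dataGray{1:end});
sizeGray = size(XGray);     % a singleton channel dimension is inserted
```

The RGB batch has size 224-by-224-by-3-by-3, and the grayscale batch has size 28-by-28-by-1-by-2, which matches the "SSCB" layout in both cases.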

Input Arguments

modelfile: Name of the PyTorch model file, specified as a character vector or string scalar. modelfile must be in the current folder, or you must include a full or relative path to the file. The PyTorch model must be pretrained and traced over one inference iteration.

For more information about how to trace a PyTorch model, see https://pytorch.org/docs/stable/generated/torch.jit.trace.html and Trace and Save Trained PyTorch Model.

Example: "mobilenet_v3.pt"

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Example: importNetworkFromPyTorch(modelfile,Namespace="CustomLayers") imports the network in modelfile and saves the custom layers namespace +CustomLayers in the current folder.

Namespace: Name of the custom layers namespace in which importNetworkFromPyTorch saves custom layers, specified as a character vector or string scalar. importNetworkFromPyTorch saves the custom layers namespace +Namespace in the current folder. If you do not specify Namespace, then importNetworkFromPyTorch saves the custom layers in a namespace named +modelfile in the current folder. For more information about namespaces, see Create Namespaces.

importNetworkFromPyTorch tries to generate a custom layer when you import a custom PyTorch layer or when the software cannot convert a PyTorch layer into an equivalent built-in MATLAB® layer. importNetworkFromPyTorch saves each generated custom layer to a separate MATLAB code file in +Namespace. To view or edit a custom layer, open the associated MATLAB code file. For more information about custom layers, see Custom Layers.

The +Namespace namespace can also contain the inner namespace +ops. This inner namespace contains MATLAB functions corresponding to PyTorch operators that the automatically generated custom layers use. importNetworkFromPyTorch saves the associated MATLAB function for each operator in a separate MATLAB code file in the inner namespace +ops. The object functions of dlnetwork, such as the predict function, use these operators when they interact with the custom layers. The inner namespace +ops can also contain placeholder functions. For more information, see Placeholder Functions.

Example: Namespace="mobilenet_v3"

PyTorchInputSizes: Dimension sizes of the PyTorch network inputs, specified as a numeric array, string scalar, or cell array. The dimension input order is the same as in the PyTorch network. You can specify PyTorchInputSizes as a numeric array only when the network has a single nonscalar input. If the network has multiple inputs, PyTorchInputSizes must be a cell array of the input sizes. For an input whose size or shape is not known, specify PyTorchInputSizes as "unknown". For an input that corresponds to a 0-dimensional scalar in PyTorch, specify PyTorchInputSizes as "scalar".

The standard input layers that importNetworkFromPyTorch supports are ImageInputLayer (SSCB), FeatureInputLayer (CB), ImageInputLayer3D (SSSCB), and SequenceInputLayer (CBT). Here, S is spatial, C is channel, B is batch, and T is time. importNetworkFromPyTorch also supports nonstandard inputs through PyTorchInputSizes. For example, import the network and specify the input dimension sizes with this function call: net = importNetworkFromPyTorch("nonStandardModel.pt",PyTorchInputSizes=[1 3 224]). Then, initialize the network with a U-labeled dlarray object, where U stands for unknown, by using these function calls: X = dlarray(rand(1,3,224),"UUU") and net = initialize(net,X). The software interprets a U-labeled dlarray as data in PyTorch order.

Example: PyTorchInputSizes=[NaN 3 224 224] is a network with one input that is a batch of images.

Example: PyTorchInputSizes={[NaN 3 224 224],"unknown"} is a network with two inputs. The first input is a batch of images, and the second input has an unknown size.

Data Types: numeric array | string | cell array
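Because PyTorchInputSizes follows the PyTorch dimension order, an image batch size such as [NaN 3 224 224] lists batch, channels, height, and width, whereas an image input layer in MATLAB takes the size as height, width, channels. The sketch below shows one way to convert between the two orders. It assumes the common PyTorch image layout (batch, channel, height, width), and the array Xpt is a hypothetical stand-in for data stored in that order.

```matlab
% PyTorch-ordered size of an image batch: [batch channels height width].
pytorchSize = [NaN 3 224 224];

% Size in the order used by an image input layer: [height width channels].
matlabInputSize = pytorchSize([3 4 2]);

% Hypothetical data in PyTorch order: 2 observations, 3 channels, 4-by-5 pixels.
Xpt = rand(2,3,4,5);

% Reorder to height-by-width-by-channels-by-batch ("SSCB").
Xml = permute(Xpt,[3 4 2 1]);
sizeMatlab = size(Xml);
```

Under this assumption, [NaN 3 224 224] corresponds to the input size [224 224 3] used in the examples, and the permuted array has size 4-by-5-by-3-by-2.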

Representation of network composition, specified as one of these values:

- "networklayer": Represent network composition in the imported network by using networkLayer layer objects. When you specify this value, the software converts as many PyTorch functions as possible into Deep Learning Toolbox layers, with the constraint that the number of custom layers does not increase.
- "customlayer": Represent network composition in the imported network by using nested custom layers. When you specify this value, importNetworkFromPyTorch converts sequences of PyTorch functions into Deep Learning Toolbox functions before consolidating them into a custom layer. For more information about custom layers, see Define Custom Deep Learning Layers.

Example: PreferredNestingType="customlayer"

Data Types: char | string

Output Arguments

Pretrained PyTorch network, returned as an uninitialized dlnetwork object.

If you edit the input layer of the network without using the addInputLayer function, you must update the InputNames property of the network. If you edit the output layer, you must update OutputNames. For an example, see Train Network Imported from PyTorch to Classify New Images.

Before using an imported network, you must add an input layer or initialize the network. For examples, see Import Network from PyTorch and Add Input Layer and Import Network from PyTorch and Initialize.

Limitations

- The importNetworkFromPyTorch function can import most, but not all, networks created in PyTorch versions other than version 2.0. The function fully supports PyTorch version 2.0.
- The importNetworkFromPyTorch function does not support PyTorch object detection models that contain Torchvision operators.

More About

The importNetworkFromPyTorch function supports the PyTorch layers, functions, and operators listed in this section for conversion into built-in MATLAB layers and functions with dlarray support. For more information about functions that operate on dlarray objects, see List of Functions with dlarray Support. The conversion can have limitations.

This table shows the correspondence between PyTorch layers and Deep Learning Toolbox layers. In some cases, when importNetworkFromPyTorch cannot convert a PyTorch layer into a MATLAB layer, the software converts the PyTorch layer into a Deep Learning Toolbox function with dlarray support.

| PyTorch layer | Corresponding Deep Learning Toolbox layer | Alternative Deep Learning Toolbox function |
|---|---|---|
| torch.nn.AdaptiveAvgPool2d | adaptiveAveragePooling2dLayer | pyAdaptiveAvgPool2d |
| torch.nn.AvgPool1d | averagePooling1dLayer | pyAvgPool1d |
| torch.nn.AvgPool2d | averagePooling2dLayer | Not applicable |
| torch.nn.BatchNorm2d | batchNormalizationLayer | Not applicable |
| torch.nn.Conv1d | convolution1dLayer | pyConvolution |
| torch.nn.Conv2d | convolution2dLayer | Not applicable |
| torch.nn.ConvTranspose1d | transposedConv1dLayer | pyConvolution |
| torch.nn.ConvTranspose2d | transposedConv2dLayer | pyConvolution |
| torch.nn.Dropout | dropoutLayer | Not applicable |
| torch.nn.Dropout2d | spatialDropoutLayer | pyFeatureDropout |
| torch.nn.Embedding | Not applicable | pyEmbedding |
| torch.nn.GELU | geluLayer | pyGelu |
| torch.nn.GLU | Not applicable | pyGLU |
| torch.nn.GroupNorm | groupNormalizationLayer | Not applicable |
| torch.nn.LayerNorm | layerNormalizationLayer | Not applicable |
| torch.nn.LSTM | lstmLayer | Not applicable |
| torch.nn.LeakyReLU | leakyReluLayer | pyLeakyRelu |
| torch.nn.Linear | fullyConnectedLayer | pyLinear |
| torch.nn.MaxPool1d | maxPooling1dLayer | pyMaxPool1d |
| torch.nn.MaxPool2d | maxPooling2dLayer | Not applicable |
| torch.nn.MultiheadAttention | | Not applicable |
| torch.nn.PReLU | preluLayer | pyPReLU |
| torch.nn.ReLU | reluLayer | relu |
| torch.nn.SiLU | swishLayer | pySilu |
| torch.nn.Sigmoid | sigmoidLayer | pySigmoid |
| torch.nn.Softmax | nnet.pytorch.layer.SoftmaxLayer | pySoftmax |
| torch.nn.Tanh | tanhLayer | tanh |
| torch.nn.Upsample | resize2dLayer (Image Processing Toolbox) | pyUpsample2d (requires Image Processing Toolbox™) |
| torch.nn.UpsamplingNearest2d | resize2dLayer (Image Processing Toolbox) | pyUpsample2d (requires Image Processing Toolbox) |
| torch.nn.UpsamplingBilinear2d | resize2dLayer (Image Processing Toolbox) | pyUpsample2d (requires Image Processing Toolbox) |

This table shows the correspondence between Hugging Face® Python® transformer layers and Deep Learning Toolbox layers.

| Hugging Face Python transformer layer | Corresponding Deep Learning Toolbox layer |
|---|---|
| transformers.models.bert.modeling_bert.BertAttention | If the layer |
| transformers.models.bert.modeling_bert.RobertaSelfAttention | If the layer |
| transformers.models.distilbert.modeling_distilbert.MultiheadSelfAttention | |

This table shows the correspondence between PyTorch functions and Deep Learning Toolbox layers and functions. The value of the PreferredNestingType name-value argument determines whether importNetworkFromPyTorch converts a PyTorch function into a layer or a function.

| PyTorch function | Corresponding Deep Learning Toolbox layer | Corresponding Deep Learning Toolbox function |
|---|---|---|
| torch.nn.functional.adaptive_avg_pool2d | adaptiveAveragePooling2dLayer | pyAdaptiveAvgPool2d |
| torch.nn.functional.avg_pool1d | averagePooling1dLayer | pyAvgPool1d |
| torch.nn.functional.avg_pool2d | averagePooling2dLayer | pyAvgPool2d |
| torch.nn.functional.conv1d | convolution1dLayer | pyConvolution |
| torch.nn.functional.conv2d | convolution2dLayer | pyConvolution |
| torch.nn.functional.dropout | dropoutLayer | pyDropout |
| torch.nn.functional.embedding | Not applicable | pyEmbedding |
| torch.nn.functional.gelu | geluLayer | pyGelu |
| torch.nn.functional.glu | Not applicable | pyGLU |
| torch.nn.functional.hardsigmoid | Not applicable | pyHardSigmoid |
| torch.nn.functional.hardswish | Not applicable | pyHardSwish |
| torch.nn.functional.layer_norm | layerNormalizationLayer | pyLayerNorm |
| torch.nn.functional.leaky_relu | leakyReluLayer | pyLeakyRelu |
| torch.nn.functional.linear | fullyConnectedLayer | pyLinear |
| torch.nn.functional.log_softmax | Not applicable | pyLogSoftmax |
| torch.nn.functional.pad | Not applicable | pyPad |
| torch.nn.functional.max_pool1d | maxPooling1dLayer | pyMaxPool1d |
| torch.nn.functional.max_pool2d | maxPooling2dLayer | pyMaxPool2d |
| torch.nn.functional.prelu | preluLayer | pyPReLU |
| torch.nn.functional.relu | reluLayer | relu |
| torch.nn.functional.silu | swishLayer | pySilu |
| torch.nn.functional.softmax | nnet.pytorch.layer.SoftmaxLayer | pySoftmax |
| torch.nn.functional.tanh | tanhLayer | tanh |
| torch.nn.functional.upsample | resize2dLayer (Image Processing Toolbox) | pyUpsample2d (requires Image Processing Toolbox) |

This table shows the correspondence between PyTorch mathematical operators and Deep Learning Toolbox functions. The importNetworkFromPyTorch function first tries to convert the PyTorch cat operator into a concatenation layer and, if that fails, into a function.

| PyTorch operator | Corresponding Deep Learning Toolbox layer or function | Alternative Deep Learning Toolbox function |
|---|---|---|
| +, -, / | pyElementwiseBinary | Not applicable |
| torch.abs | pyAbs | Not applicable |
| torch.arange | pyArange | Not applicable |
| torch.argmax | pyArgMax | Not applicable |
| torch.baddbmm | pyBaddbmm | Not applicable |
| torch.bitwise_not | pyBitwiseNot | Not applicable |
| torch.bmm | pyMatMul | Not applicable |
| torch.cat | concatenationLayer | pyConcat |
| torch.chunk | pyChunk | Not applicable |
| torch.clamp_min | pyClampMin | Not applicable |
| torch.clone | identityLayer | Not applicable |
| torch.concat | pyConcat | Not applicable |
| torch.cos | pyCos | Not applicable |
| torch.cumsum | pyCumsum | Not applicable |
| torch.detach | pyDetach | Not applicable |
| torch.eq | pyEq | Not applicable |
| torch.floor_div | pyElementwiseBinary | Not applicable |
| torch.gather | pyGather | Not applicable |
| torch.ge | pyGe | Not applicable |
| torch.matmul | pyMatMul | Not applicable |
| torch.max | pyMaxBinary/pyMaxUnary | Not applicable |
| torch.mean | pyMean | Not applicable |
| torch.mul, * | multiplicationLayer | pyElementwiseBinary |
| torch.norm | pyNorm | Not applicable |
| torch.permute | pyPermute | Not applicable |
| torch.pow | pyElementwiseBinary | Not applicable |
| torch.remainder | pyRemainder | Not applicable |
| torch.repeat | pyRepeat | Not applicable |
| torch.repeat_interleave | pyRepeatInterleave | Not applicable |
| torch.reshape | pyView | Not applicable |
| torch.rsqrt | pyRsqrt | Not applicable |
| torch.size | pySize | Not applicable |
| torch.sin | pySin | Not applicable |
| torch.split | pySplitWithSizes | Not applicable |
| torch.sqrt | pyElementwiseBinary | Not applicable |
| torch.square | pySquare | Not applicable |
| torch.squeeze | pySqueeze | Not applicable |
| torch.stack | pyStack | Not applicable |
| torch.sum | pySum | Not applicable |
| torch.t | pyT | Not applicable |
| torch.to | pyTo | Not applicable |
| torch.transpose | pyTranspose | Not applicable |
| torch.unsqueeze | pyUnsqueeze | Not applicable |
| torch.zeros | pyZeros | Not applicable |
| torch.zeros_like | pyZerosLike | Not applicable |

This table shows the correspondence between PyTorch array operators and Deep Learning Toolbox functions.

| PyTorch operator | Corresponding Deep Learning Toolbox function or operator |
|---|---|
| Indexing (for example, X[:,1]) | pySlice |
| torch.tensor.contiguous | = |
| torch.tensor.expand | pyExpand |
| torch.tensor.expand_as | pyExpandAs |
| torch.tensor.masked_fill | pyMaskedFill |
| torch.tensor.select | pySlice |
| torch.tensor.view | pyView |

When the importNetworkFromPyTorch function cannot convert a PyTorch layer into a built-in MATLAB layer or generate a custom layer with associated MATLAB functions, the function creates a custom layer with a placeholder function. You must complete the placeholder function before you can use the network.

This code snippet defines the custom layer with the placeholder function pyAtenUnsupportedOperator.

classdef UnsupportedOperator < nnet.layer.Layer
    function [output] = predict(obj,arg1)
        % Placeholder function for aten::<unsupportedOperator>
        output= pyAtenUnsupportedOperator(arg1,params);
    end
end

importNetworkFromPyTorch accepts traced, pretrained PyTorch models. Trace the model by using the torch.jit.trace() command before you save it. Then, save the traced model by using the save method. The following code shows an example of how to trace and save a PyTorch model using example inputs X. The PyTorch model in this example accepts inputs of size (1,3,224,224).

import torch

# `model` is assumed to be an existing pretrained torch.nn.Module.

# Ensure the layers are set to inference mode.
model.eval()

# Move the model to the CPU.
model.to("cpu")

# Generate input data.
X = torch.rand(1,3,224,224)

# Trace the model.
traced_model = torch.jit.trace(model.forward, X)

# Save the traced model.
traced_model.save('myModel.pt')

Tips

To use a pretrained network for prediction or transfer learning on new images, you must preprocess the images in the same way as the images that were used to train the imported model. The most common preprocessing steps are resizing the images, subtracting image average values, and converting the images from BGR format to RGB format.

For more information about preprocessing images for training and prediction, see Preprocess Images for Deep Learning.
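
To make two of these preprocessing steps concrete, here is a minimal Python sketch that converts a tiny image from BGR to RGB and then subtracts a per-channel mean. The function names and the mean values are hypothetical placeholders. In practice, use the statistics and channel order of the data on which the imported model was trained.

```python
def bgr_to_rgb(image):
    """Reverse the channel order of every pixel (BGR -> RGB)."""
    return [[pixel[::-1] for pixel in row] for row in image]


def subtract_channel_means(image, means):
    """Subtract a per-channel mean from every pixel."""
    return [[[value - mean for value, mean in zip(pixel, means)]
             for pixel in row]
            for row in image]


# A 1-by-2 toy image stored as rows of [B, G, R] pixels.
bgr_image = [[[10, 20, 30], [40, 50, 60]]]

rgb_image = bgr_to_rgb(bgr_image)

# Placeholder means in R, G, B order; not taken from any real data set.
centered = subtract_channel_means(rgb_image, means=[30, 20, 10])
print(centered)
```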

You cannot access the members of the +Namespace namespace if the parent folder of the namespace is not on the MATLAB path. For more information, see Namespaces and the MATLAB Path.

MATLAB uses one-based indexing, whereas Python uses zero-based indexing. In other words, the first element of an array has an index of 1 in MATLAB and 0 in Python. For more information about MATLAB indexing, see Array Indexing. In MATLAB, to use an array of indices (ind) created in Python, convert the array to ind+1.

If you encounter a Python library conflict, use the pyenv function to specify the ExecutionMode name-value argument as "OutOfProcess".

For more tips, see Tips on Importing Models from TensorFlow, PyTorch, and ONNX.
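
The index conversion mentioned above can be sketched in Python as follows. The helper names are hypothetical. The only point is the offset of one between the two conventions.

```python
def to_matlab_indices(python_indices):
    """Convert zero-based (Python) indices to one-based (MATLAB) indices."""
    return [index + 1 for index in python_indices]


def to_python_indices(matlab_indices):
    """Convert one-based (MATLAB) indices back to zero-based (Python) indices."""
    return [index - 1 for index in matlab_indices]


ind = [0, 2, 5]                   # indices created in Python
matlab_ind = to_matlab_indices(ind)
print(matlab_ind)                 # the equivalent of ind+1 in MATLAB
```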

Algorithms

The importNetworkFromPyTorch function imports a PyTorch layer into MATLAB by trying these steps in order:

1. The function tries to import the PyTorch layer as a built-in MATLAB layer. For more information, see Conversion of PyTorch Layers.
2. The function tries to import the PyTorch layer as a built-in MATLAB function. For more information, see Conversion of PyTorch Layers.
3. The function tries to import the PyTorch layer as a custom layer. importNetworkFromPyTorch saves the generated custom layers and the associated functions in the +Namespace namespace. For an example, see Import Network from PyTorch and Find Generated Custom Layers.
4. The function imports the PyTorch layer as a custom layer with a placeholder function. You must complete the placeholder function before you can use the network; see Placeholder Functions.

In the first three cases, the imported network is ready for prediction after you initialize it.
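
The ordered fallback above can be sketched in Python as a chain of lookups. This is an illustrative model only, not the actual implementation of importNetworkFromPyTorch. The two dictionaries hold a few rows copied from the conversion tables in this section, while CUSTOM_LAYER_CAPABLE, its entry, and the function names are hypothetical.

```python
# A few rows excerpted from the conversion tables in this section.
BUILTIN_LAYERS = {
    "torch.nn.ReLU": "reluLayer",
    "torch.nn.Conv2d": "convolution2dLayer",
    "torch.nn.Linear": "fullyConnectedLayer",
}
BUILTIN_FUNCTIONS = {
    "torch.nn.Embedding": "pyEmbedding",
    "torch.nn.GLU": "pyGLU",
}
# Hypothetical: layers for which a custom layer could be generated.
CUSTOM_LAYER_CAPABLE = {"example.HypotheticalBlock"}

PLACEHOLDER = "custom layer with placeholder function"


def resolve_import(pytorch_layer):
    """Return (strategy, target) by trying each step in order."""
    if pytorch_layer in BUILTIN_LAYERS:          # step 1
        return ("built-in layer", BUILTIN_LAYERS[pytorch_layer])
    if pytorch_layer in BUILTIN_FUNCTIONS:       # step 2
        return ("built-in function", BUILTIN_FUNCTIONS[pytorch_layer])
    if pytorch_layer in CUSTOM_LAYER_CAPABLE:    # step 3
        return ("generated custom layer", None)
    return (PLACEHOLDER, None)                   # step 4


def ready_after_initialization(strategy):
    """Only the placeholder case needs manual work before prediction."""
    return strategy != PLACEHOLDER


for name in ["torch.nn.ReLU", "torch.nn.Embedding", "example.Unknown"]:
    strategy, target = resolve_import(name)
    print(name, "->", strategy, target, ready_after_initialization(strategy))
```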

Alternative Functionality

App

You can also import networks from external platforms by using the Deep Network Designer app. The app uses the importNetworkFromPyTorch function to import the network and displays a progress dialog box. During the import process, the app adds an input layer to the network, if possible, and displays an import report with details about any issues that require attention. After importing a network, you can interactively edit, visualize, and analyze it. When you finish editing the network, you can export it to Simulink® or generate MATLAB code for building networks.

Block

You can also work with PyTorch networks by using the PyTorch Model Predict block. This block additionally lets you load Python functions to preprocess and postprocess data, and interactively configure input and output ports.

Version History

Introduced in R2022b

importNetworkFromPyTorch sets the order of the inputs and outputs by using the InputNames and OutputNames properties of the dlnetwork object.

- When you update the network inputs without using the addInputLayer function, you must also update the InputNames property.
- When you update the network outputs, you must also update the OutputNames property.

When you import a PyTorch network, importNetworkFromPyTorch converts a PyTorch function into a Deep Learning Toolbox layer if these conditions are met:

- The PreferredNestingType name-value argument is "networklayer".
- The PyTorch function has an equivalent Deep Learning Toolbox layer.
- The PyTorch function is at the start of the network, follows a PyTorch layer, or comes after a PyTorch layer that has been converted into a Deep Learning Toolbox layer.

importNetworkFromPyTorch consolidates a sequence of Deep Learning Toolbox functions converted from PyTorch functions into one custom layer. The software minimizes the number of custom layers in the network.

In previous releases, importNetworkFromPyTorch converted all PyTorch functions into Deep Learning Toolbox functions.

You can import these PyTorch layers and functions into Deep Learning Toolbox layers:

- torch.nn.AvgPool1d
- torch.nn.LSTM
- torch.nn.MaxPool1d
- torch.nn.MultiheadAttention
- torch.nn.PReLU
- torch.nn.functional.avg_pool1d
- torch.nn.functional.max_pool1d
- torch.nn.functional.upsample

You can also specify the PyTorchInputSizes name-value argument to import these PyTorch layers as Deep Learning Toolbox layers instead of custom layers:

- torch.clone
- torch.nn.Dropout
- torch.nn.GELU
- torch.nn.LeakyReLU
- torch.nn.ReLU
- torch.nn.Sigmoid
- torch.nn.SiLU
- torch.nn.Tanh

You can import these Hugging Face layers into Deep Learning Toolbox layers:

- transformers.models.bert.modeling_bert.BertAttention
- transformers.models.bert.modeling_bert.RobertaSelfAttention
- transformers.models.distilbert.modeling_distilbert.MultiheadSelfAttention

You can import a network that uses networkLayer objects to represent network composition. To specify whether the imported network represents composition by using networkLayer objects or custom layer objects, use the PreferredNestingType name-value argument. For more information, see Deep Learning Network Composition.

You can import this PyTorch operator and these PyTorch layers into Deep Learning Toolbox layers:

- torch.clone
- torch.nn.AdaptiveAvgPool2d
- torch.nn.Dropout2d
- torch.nn.PReLU

You can also import these PyTorch operators, functions, and layers into custom layers:

- torch.abs
- torch.arange
- torch.baddbmm
- torch.bitwise_not
- torch.cos
- torch.cumsum
- torch.ge
- torch.remainder
- torch.repeat_interleave
- torch.sin
- torch.rsqrt
- torch.zeros_like
- torch.nn.functional.pad
- torch.nn.functional.glu
- torch.nn.GLU

You can import a network traced in PyTorch 2.0. Previously, importNetworkFromPyTorch supported importing networks created with PyTorch versions 1.10.0 and earlier.

You can now import a PyTorch network that includes the torch.nn.Embedding and torch.nn.Tanh layers.

You can now import a PyTorch network that includes the torch.nn.functional.embedding and torch.nn.functional.tanh functions.

You can now import a PyTorch network that includes the torch.eq and torch.tensor.masked_fill operators.

importNetworkFromPyTorch supports importing PyTorch models with weight tying.

importNetworkFromPyTorch supports importing PyTorch models with weight sharing.

importNetworkFromPyTorch lets you specify the dimension sizes of the PyTorch network inputs. Specify the input sizes by using the PyTorchInputSizes name-value argument.

See Also

importNetworkFromONNX | importNetworkFromTensorFlow | exportONNXNetwork | exportNetworkToTensorFlow | dlnetwork | dlarray | addInputLayer
