Differential Equations and Linear Algebra, 6.5: Symmetric Matrices, Real Eigenvalues, Orthogonal Eigenvectors

From the series: Differential Equations and Linear Algebra

Gilbert Strang, Massachusetts Institute of Technology (MIT)

Published: 27 Jan 2016

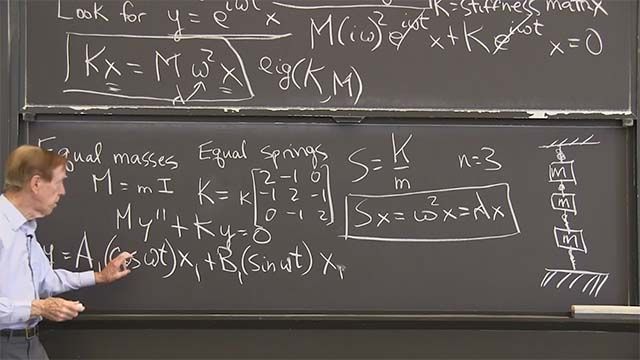

OK. So this is a "prepare the way" video about symmetric matrices and complex matrices. We'll see symmetric matrices in second order systems of differential equations.

Symmetric matrices are the best. They have special properties, and we want to see what those special properties are for the eigenvalues and the eigenvectors. And I guess the title of this lecture tells you what those properties are. So if a matrix is symmetric-- and I'll use capital S for a symmetric matrix-- the first point is the eigenvalues are real, which is not automatic. But it's always true if the matrix is symmetric. And the second, even more special point is that the eigenvectors are perpendicular to each other. Eigenvectors for different eigenvalues come out perpendicular. Those are beautiful properties. They pay off.

So that's the symmetric matrix, and that's what I just said. Real lambda, orthogonal x.

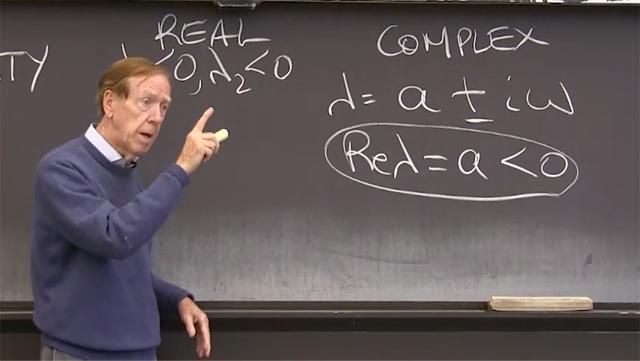

Also, we could look at antisymmetric matrices. The transpose is minus the matrix. In that case, we don't have real eigenvalues. In fact, we are sure to have pure imaginary eigenvalues: i times something real, on the imaginary axis.

But again, the eigenvectors will be orthogonal. However, they will also be complex. When we have antisymmetric matrices, we get into complex numbers. Can't help it, even if the matrix is real.

And then finally there is the family of orthogonal matrices. And those matrices have eigenvalues of size 1, possibly complex. But the magnitude of the number is 1. And again, the eigenvectors are orthogonal. This is the great family: real, imaginary, and unit circle for the eigenvalues.

OK. I want to do examples. So I'll just have an example of every one.

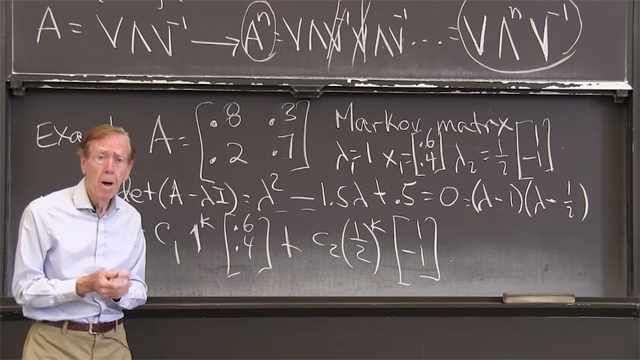

So there's a symmetric matrix. There's an antisymmetric matrix. If I transpose it, it changes sign. Here is a combination, not symmetric, not antisymmetric, but still a good matrix. And there is an orthogonal matrix, orthogonal columns. And those columns have length 1. That's why I've got the square root of 2 in there.

So these are the special matrices here. Can I just draw a little picture of the complex plane? There is the real axis. Here is the imaginary axis. And here's the unit circle, not greatly circular but close.

Now-- eigenvalues are on the real axis when S transpose equals S. They're on the imaginary axis when A transpose equals minus A. And they're on the unit circle when Q transpose Q is the identity. Q transpose is Q inverse in this case. Here the transpose is the matrix. Here the transpose is minus the matrix. And you see the beautiful picture of the eigenvalues, where they are. And the eigenvectors for all of those are orthogonal. Let me find them.
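The three locations just described can be written compactly. This is a summary sketch, assuming S, A, and Q are real square matrices and β denotes a real number (the symbol β is not from the lecture):

```latex
\begin{aligned}
S^{\mathsf T} = S \quad &\Longrightarrow \quad \lambda \text{ is real} \\
A^{\mathsf T} = -A \quad &\Longrightarrow \quad \lambda = i\beta \text{ (on the imaginary axis)} \\
Q^{\mathsf T} Q = I \quad &\Longrightarrow \quad |\lambda| = 1 \text{ (on the unit circle)}
\end{aligned}
```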

Here that symmetric matrix has lambda as 2 and 4. The trace is 6. The determinant is 8. That's the right answer. Lambda equal 2 and 4. And x would be 1 and minus 1 for 2. And for 4, it's 1 and 1.

Orthogonal. Take the dot product of those eigenvectors and you get 0. And the eigenvalues are real.
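The symmetric example can be checked numerically. The sketch below assumes the matrix on the board is S = [[3, 1], [1, 3]], which is reconstructed from the stated trace 6, determinant 8, and eigenvectors (1, -1) and (1, 1); the slide itself is not reproduced here. The helper names are illustrative only.

```python
import math

# Assumed matrix, reconstructed from trace 6, determinant 8,
# and the eigenvectors (1, -1) and (1, 1) quoted in the lecture.
S = [[3.0, 1.0],
     [1.0, 3.0]]


def eigenvalues_2x2_symmetric(m):
    """Roots of lambda^2 - trace*lambda + det = 0 for a real symmetric 2x2 matrix."""
    trace = m[0][0] + m[1][1]
    det = m[0][0] * m[1][1] - m[0][1] * m[1][0]
    # For a real symmetric matrix the discriminant is never negative,
    # which is exactly why the eigenvalues are real.
    root = math.sqrt(trace * trace - 4.0 * det)
    return (trace - root) / 2.0, (trace + root) / 2.0


def matvec(m, v):
    """Multiply a 2x2 matrix by a 2-vector."""
    return [m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]]


def dot(u, v):
    """Ordinary dot product of two real 2-vectors."""
    return u[0] * v[0] + u[1] * v[1]


lam1, lam2 = eigenvalues_2x2_symmetric(S)
x1 = [1.0, -1.0]   # eigenvector for the smaller eigenvalue
x2 = [1.0, 1.0]    # eigenvector for the larger eigenvalue

print("eigenvalues:", lam1, lam2)
print("S x1 =", matvec(S, x1), " lam1 * x1 =", [lam1 * c for c in x1])
print("S x2 =", matvec(S, x2), " lam2 * x2 =", [lam2 * c for c in x2])
print("x1 . x2 =", dot(x1, x2))   # zero means perpendicular
```

The quadratic formula is used here only because the matrix is 2 by 2; for larger matrices a numerical library would be the usual choice.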

What about A? Antisymmetric. When I do the determinant of lambda I minus A, I get lambda squared plus 1 equals 0 for this one. So that gives me lambda is i and minus i, as promised, on the imaginary axis. And I guess that that matrix is also an orthogonal matrix. And those eigenvalues, i and minus i, are also on the circle. So that A is also a Q. OK.

What are the eigenvectors for that? I think that the eigenvectors turn out to be (1, i) and (1, minus i). Oh. Those are orthogonal. I'll have to tell you about orthogonality for complex vectors. Let me complete these examples.
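A short check of the antisymmetric example, assuming A = [[0, 1], [-1, 0]]. The sign convention is an assumption, since the slide is not reproduced here; with the opposite sign the same two eigenvectors simply swap eigenvalues. The helper names are illustrative only.

```python
import cmath

# Assumed antisymmetric matrix: its transpose equals minus itself.
A = [[0, 1],
     [-1, 0]]


def eigenvalues_2x2(m):
    """Roots of lambda^2 - trace*lambda + det = 0, allowing complex roots."""
    trace = m[0][0] + m[1][1]
    det = m[0][0] * m[1][1] - m[0][1] * m[1][0]
    root = cmath.sqrt(trace * trace - 4 * det)
    return (trace + root) / 2, (trace - root) / 2


def matvec(m, v):
    """Multiply a 2x2 matrix by a 2-vector (entries may be complex)."""
    return [m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]]


lam_plus, lam_minus = eigenvalues_2x2(A)   # characteristic equation: lambda^2 + 1 = 0

x_plus = [1, 1j]     # candidate eigenvector for lambda = i
x_minus = [1, -1j]   # candidate eigenvector for lambda = -i

print("eigenvalues:", lam_plus, lam_minus)
print("A x_plus  =", matvec(A, x_plus), " i * x_plus  =", [1j * c for c in x_plus])
print("A x_minus =", matvec(A, x_minus), " -i * x_minus =", [-1j * c for c in x_minus])
```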

What about the eigenvalues of this one? Well, that's an easy one. Can you connect that to A? B is just A plus 3 times the identity-- to put 3's on the diagonal. So I'm expecting here the lambdas are-- if here they were i and minus i. All I've done is add 3 times the identity, so I'm just adding 3. I'm shifting by 3. I'll have 3 plus i and 3 minus i. And the same eigenvectors.

So that's a complex number. That matrix is not antisymmetric, and it's not symmetric. So that gave me 3 plus i, somewhere not on the real axis or the imaginary axis or the circle. Out there-- 3 plus i and 3 minus i.
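The shift argument for B = A + 3I takes one line. If x is an eigenvector of A with eigenvalue λ, then:

```latex
A x = \lambda x
\quad \Longrightarrow \quad
(A + 3I)\,x = A x + 3x = (\lambda + 3)\,x
```

So every eigenvalue moves by 3 and the eigenvectors do not change. With λ = ±i this gives 3 + i and 3 − i.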

And finally, this one, the orthogonal matrix. What are the eigenvalues of that? Let's see. I can see-- here I've added 1 times the identity to that antisymmetric matrix, the one with minus 1 and 1 off the diagonal. So again, I have that matrix plus the identity. So I would have 1 plus i and 1 minus i from the matrix. And now I've got a division by square root of 2. And those numbers lambda-- you recognize that number when you see it. It is on the unit circle. Where is it on the unit circle? 1 plus i over square root of 2. There's 1. There's i. Dividing by square root of 2 brings it down onto the circle. That's 1 plus i over square root of 2. And here is 1 minus i over square root of 2. Complex conjugates.
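A sketch that checks the orthogonal example, assuming Q = (I + A) / sqrt(2) with A = [[0, 1], [-1, 0]]. The exact entries are an assumption, since the slide is not reproduced here. It verifies that the columns are orthonormal and that (1 + i)/sqrt(2) and its conjugate are eigenvalues of magnitude 1.

```python
import math

r = 1.0 / math.sqrt(2.0)

# Assumed orthogonal matrix: (identity + antisymmetric A) divided by sqrt(2).
Q = [[r, r],
     [-r, r]]


def matvec(m, v):
    """Multiply a 2x2 matrix by a 2-vector (entries may be complex)."""
    return [m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]]


# Columns of Q should be perpendicular and have length 1.
c1 = [Q[0][0], Q[1][0]]
c2 = [Q[0][1], Q[1][1]]
col_dot = c1[0] * c2[0] + c1[1] * c2[1]
col_len = math.hypot(c1[0], c1[1])

# Claimed eigenpairs: (1 + i)/sqrt(2) with (1, i), and the complex conjugates.
lam = (1 + 1j) * r
x = [1, 1j]
lam_bar = lam.conjugate()
x_bar = [1, -1j]

# Largest entry of Q x - lambda x; it should be zero up to rounding.
residual = max(abs(a - lam * b) for a, b in zip(matvec(Q, x), x))
residual_bar = max(abs(a - lam_bar * b) for a, b in zip(matvec(Q, x_bar), x_bar))

print("column dot product:", col_dot, " column length:", col_len)
print("residuals:", residual, residual_bar)
print("|lambda| =", abs(lam), " |lambda bar| =", abs(lam_bar))
```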

When I say "complex conjugate," that means I change every i to a minus i. I flip across the real axis. I'll want to do that in a minute.

So are there more lessons to see in these examples? Again, real eigenvalues and real eigenvectors-- no problem. Here, imaginary eigenvalues. Here, complex eigenvalues. Here, complex eigenvalues on the circle. OK. And each of those facts that I just said about the location of the eigenvalues-- it has a short proof, but maybe I won't give the proof here. It's the fact that you want to remember.

Can I bring down again, just for a moment, these main facts? Real, from symmetric-- imaginary, from antisymmetric-- magnitude 1, from orthogonal. OK.

Now I feel I've been talking about complex numbers, and I really should say-- I should pay attention to that. Complex numbers. So I have lambda as a plus ib.

What do I mean by the "magnitude" of that number? What's the magnitude of lambda equals a plus ib? Again, I go along a, up b. Here is the lambda, the complex number. And I want to know the length of that. Well, everybody knows the length of that. Thank goodness Pythagoras lived, or his team lived. It's the square root of a squared plus b squared.

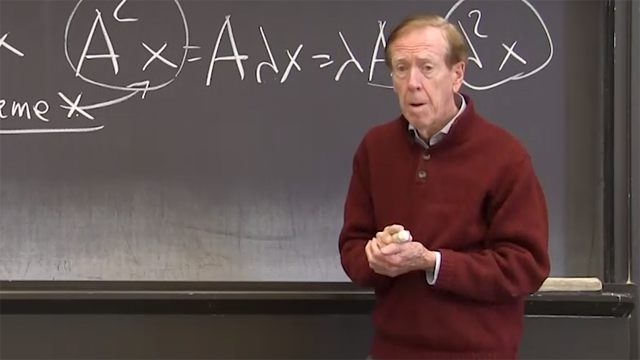

And notice what that-- how do I get that number from this one? It's important. If I multiply a plus ib times a minus ib-- so I have lambda-- that's a plus ib-- times lambda conjugate-- that's a minus ib-- if I multiply those, that gives me a squared plus b squared. So I take the square root, and this is what I would call the "magnitude" of lambda.

So the magnitude of a number is that positive length. And it can be found-- you take the complex number times its conjugate. That gives you a squared plus b squared, and then take the square root. Basic facts about complex numbers.
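Written out, with the lecture's own numbers a and b (both real):

```latex
\lambda \bar{\lambda} = (a + ib)(a - ib) = a^2 - (ib)^2 = a^2 + b^2,
\qquad
|\lambda| = \sqrt{\lambda \bar{\lambda}} = \sqrt{a^2 + b^2}
```

For the number (1 + i)/√2 this gives |λ|² = 1/2 + 1/2 = 1, which is why it sits on the unit circle.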

OK. What about complex vectors? What is the dot product? What is the correct x transpose x? Well, it's not x transpose x. Suppose x is the vector (1, i), which we saw as an eigenvector. What's the length of that vector? The length of that vector is not 1 squared plus i squared. 1 squared plus i squared would be 1 plus minus 1, which would be 0.

The length of that vector is the size of this squared plus the size of this squared, square root. Here we go. The length of x squared-- the length of the vector squared-- will be x conjugate transpose times x. As always, I can find it from a dot product. But I have to take the conjugate. If I want the length of x, I would usually take x transpose x, right? If I have a real vector x, then I find its dot product with itself, and Pythagoras tells me I have the length squared.

But if the things are complex-- I want minus i times i. I want to get lambda times lambda bar. I want to get a positive number. Minus i times i is plus 1. So I must, must do that.

So that's really what "orthogonal" would mean. For complex vectors, "orthogonal" means that x conjugate transpose y is 0. That's what I mean by "orthogonal eigenvectors" when those eigenvectors are complex. I must remember to take the complex conjugate. And I also do it for matrices.
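A minimal sketch of the complex inner product, using the eigenvectors (1, i) and (1, -i) from the antisymmetric example. The function name `inner` is illustrative; the convention assumed here conjugates the first vector, matching x conjugate transpose y.

```python
import math


def inner(x, y):
    """Complex inner product: conjugate each entry of x, multiply by y, and sum."""
    return sum(complex(a).conjugate() * b for a, b in zip(x, y))


x = [1, 1j]
y = [1, -1j]

# Without the conjugate, x "dot" x gives 1 + i^2 = 0, which is useless as a length.
naive = sum(a * a for a in x)

# With the conjugate, the i entry contributes (-i)(i) = 1, so the total is 2.
length_squared = inner(x, x)
length = math.sqrt(length_squared.real)

# Orthogonality of complex vectors: the conjugated inner product is zero.
xy = inner(x, y)

print("naive x.x       =", naive)
print("conjugated x.x  =", length_squared, " length =", length)
print("conjugated x.y  =", xy)
```

Note that without the conjugate, x and y would not look orthogonal either: 1·1 + i·(−i) equals 2, not 0.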

So if I have a symmetric matrix-- S transpose equals S. I know what that means. But suppose S is complex. Then for a complex matrix, I would look at S bar transpose equals S.

Every time I transpose, if I have complex numbers, I should take the complex conjugate. MATLAB does that automatically. If you ask for x prime, it will not just change a column to a row with that transpose, that prime. It will also take the complex conjugate.

So we must always remember to do that. Yeah. And in fact, if S was a complex matrix but it had that property-- let me give an example. So here's an S, an example of that. 1 and 2 on the diagonal, i and minus i off the diagonal. So I have a complex matrix. And if I transpose it and take complex conjugates, that brings me back to S. And this is called a "Hermitian matrix," among other possible names.
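A sketch of the Hermitian example, assuming the entries are arranged as S = [[1, i], [-i, 2]]. The placement of i and -i is an assumption; swapping them gives another Hermitian matrix with the same eigenvalues. It checks that the conjugate transpose returns S and that the eigenvalues come out real even though S is complex.

```python
import cmath

# Assumed Hermitian matrix: real diagonal, off-diagonal entries are conjugates.
S = [[1, 1j],
     [-1j, 2]]


def conjugate_transpose(m):
    """Transpose a 2x2 matrix and conjugate every entry."""
    return [[complex(m[j][i]).conjugate() for j in range(2)] for i in range(2)]


def eigenvalues_2x2(m):
    """Roots of lambda^2 - trace*lambda + det = 0, allowing complex arithmetic."""
    trace = m[0][0] + m[1][1]
    det = m[0][0] * m[1][1] - m[0][1] * m[1][0]
    root = cmath.sqrt(trace * trace - 4 * det)
    return (trace + root) / 2, (trace - root) / 2


is_hermitian = conjugate_transpose(S) == S
lam_a, lam_b = eigenvalues_2x2(S)

print("conjugate transpose equals S:", is_hermitian)
print("eigenvalues:", lam_a, lam_b)          # imaginary parts should be zero
print("imaginary parts:", lam_a.imag, lam_b.imag)
```

Here the trace is 3 and the determinant is 2 − (i)(−i) = 1, so the eigenvalues are (3 ± √5)/2.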

Hermite was an important mathematician. He studied this complex case, and he understood to take the conjugate as well as the transpose. And sometimes I would write it as S^H in his honor. So if I want one symbol to do it-- S^H. In engineering, sometimes S with a star tells me, take the conjugate when you transpose a matrix.

So those are the main facts-- let me bring those main facts down again-- orthogonal eigenvectors and location of eigenvalues.

Now I'm ready to solve differential equations. Thank you.